High level software architecture for autonomous mobile robot


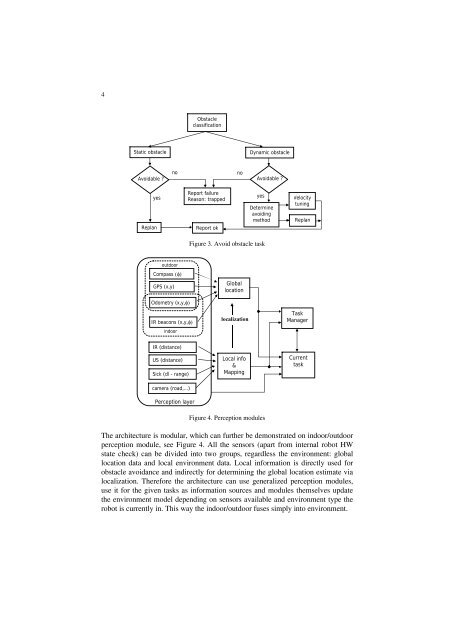

[Figure 3. Avoid obstacle task. Flowchart labels: obstacle classification (static obstacle, dynamic obstacle); "Avoidable?" decisions (yes/no); determine avoiding method (velocity tuning, replan); replan; report ok; report failure (reason: trapped).]

[Figure 4. Perception modules. Outdoor: compass (φ) and GPS (x,y) feed global location. Indoor: odometry (x,y,φ) and IR beacons (x,y,φ) feed localization. IR (distance), US (distance), Sick (dl - range) and camera (road, …) feed local info & mapping. The perception layer connects to the Task Manager and the current task.]

The architecture is modular, which can be further demonstrated on the indoor/outdoor perception module, see Figure 4. All the sensors (apart from the internal robot HW state check) can be divided into two groups, regardless of the environment: global location data and local environment data. Local information is used directly for obstacle avoidance and indirectly for determining the global location estimate via localization. Therefore the architecture can use generalized perception modules as information sources for the given tasks, and the modules themselves update the environment model depending on the sensors available and the environment type the robot is currently in. This way the indoor/outdoor distinction simply merges into the environment.
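The grouping described above can be illustrated with a minimal Python sketch. It is not the authors' implementation. The names `Group`, `Sensor`, `EnvironmentModel` and `PerceptionLayer` are hypothetical. The sensor names follow Figure 4, but treating the local sensors (IR, US, Sick, camera) as usable both indoors and outdoors is an assumption made only for this example.

```python
from dataclasses import dataclass, field
from enum import Enum
from typing import FrozenSet, Iterable, List


class Group(Enum):
    """The two sensor groups named in the text."""
    GLOBAL_LOCATION = "global location"
    LOCAL_ENVIRONMENT = "local environment"


@dataclass(frozen=True)
class Sensor:
    name: str
    group: Group
    environments: FrozenSet[str]  # environment types where the sensor is usable


BOTH = frozenset({"indoor", "outdoor"})

# Sensor names taken from Figure 4; the environment assignment of the
# local sensors is an assumption of this sketch, not stated in the paper.
SENSORS = [
    Sensor("GPS", Group.GLOBAL_LOCATION, frozenset({"outdoor"})),
    Sensor("compass", Group.GLOBAL_LOCATION, frozenset({"outdoor"})),
    Sensor("odometry", Group.GLOBAL_LOCATION, frozenset({"indoor"})),
    Sensor("IR beacons", Group.GLOBAL_LOCATION, frozenset({"indoor"})),
    Sensor("IR", Group.LOCAL_ENVIRONMENT, BOTH),
    Sensor("US", Group.LOCAL_ENVIRONMENT, BOTH),
    Sensor("Sick", Group.LOCAL_ENVIRONMENT, BOTH),
    Sensor("camera", Group.LOCAL_ENVIRONMENT, BOTH),
]


@dataclass
class EnvironmentModel:
    """Hypothetical model: records which sources currently feed each group."""
    location_sources: List[str] = field(default_factory=list)
    local_sources: List[str] = field(default_factory=list)


class PerceptionLayer:
    """Generalized perception module: tasks query it without knowing
    whether the robot is indoors or outdoors."""

    def __init__(self, sensors: Iterable[Sensor], available: Iterable[str]):
        names = set(available)
        # Keep only the sensors physically present on this robot.
        self.sensors = [s for s in sensors if s.name in names]

    def active(self, environment: str, group: Group) -> List[str]:
        """Names of available sensors of one group usable in this environment."""
        return [
            s.name
            for s in self.sensors
            if s.group is group and environment in s.environments
        ]

    def update(self, environment: str) -> EnvironmentModel:
        """Rebuild the model from whatever sources apply right now."""
        return EnvironmentModel(
            location_sources=self.active(environment, Group.GLOBAL_LOCATION),
            local_sources=self.active(environment, Group.LOCAL_ENVIRONMENT),
        )


if __name__ == "__main__":
    layer = PerceptionLayer(
        SENSORS, available={"GPS", "compass", "odometry", "US", "Sick"}
    )
    print(layer.update("outdoor"))
    print(layer.update("indoor"))
```

In this sketch a task only asks the layer for an updated model. Switching between indoor and outdoor operation changes which sources feed the model, not the interface the task sees.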