Improving Music Mood Classification Using Lyrics, Audio and Social Tags


systems and the audio-only system was statistically significant, but the difference between hybrid systems and the lyric-only system was not statistically significant.

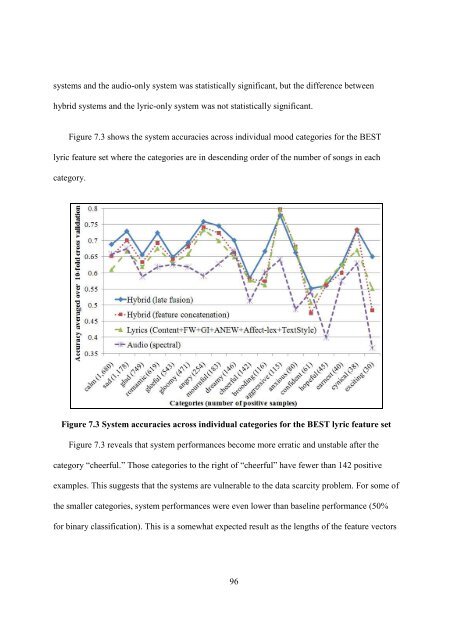

Figure 7.3 shows the system accuracies across individual mood categories for the BEST lyric feature set, where the categories are in descending order of the number of songs in each category.

Figure 7.3 System accuracies across individual categories for the BEST lyric feature set
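The per-category view in Figure 7.3 can be approximated with a short sketch: compute binary classification accuracy for each category, order the categories by size, and mark any that fall below the 50% baseline. This is only an illustration under stated assumptions, not the evaluation code behind the figure. The function names, the category names, and the toy labels are all hypothetical, and category size is taken here to be the number of positive examples.

```python
from typing import Dict, List, Tuple

Labels = Tuple[List[int], List[int]]  # (true labels, predicted labels), 1 = positive


def accuracy(y_true: List[int], y_pred: List[int]) -> float:
    """Fraction of predictions that match the true labels."""
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("label lists must be non-empty and of equal length")
    return sum(t == p for t, p in zip(y_true, y_pred)) / len(y_true)


def per_category_report(
    results: Dict[str, Labels], baseline: float = 0.5
) -> List[Tuple[str, int, float, bool]]:
    """Return (category, n_positive, accuracy, below_baseline) rows,
    sorted by descending number of positive examples."""
    rows = []
    for name, (y_true, y_pred) in results.items():
        acc = accuracy(y_true, y_pred)
        n_pos = sum(y_true)  # assumption: category size = positive examples
        rows.append((name, n_pos, acc, acc < baseline))
    rows.sort(key=lambda row: row[1], reverse=True)
    return rows


# Made-up toy labels for two hypothetical categories (not data from the study).
toy = {
    "small_cat": ([1, 0, 1, 0], [0, 0, 0, 1]),
    "large_cat": ([1, 1, 1, 0, 0, 0], [1, 1, 0, 0, 0, 1]),
}

report = per_category_report(toy)
for name, n_pos, acc, below in report:
    print(f"{name}: positives={n_pos}, accuracy={acc:.2f}, below_baseline={below}")
```

Sorting by size before plotting places the smaller categories at the right of the chart, so any instability among them is easy to see.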

Figure 7.3 reveals that system performances become more erratic and unstable after the category “cheerful.” Those categories to the right of “cheerful” have fewer than 142 positive examples. This suggests that the systems are vulnerable to the data scarcity problem. For some of the smaller categories, system performances were even lower than baseline performance (50% for binary classification). This is a somewhat expected result as the lengths of the feature vectors
