- Page 1 and 2: Tutorial CUDA Cyril Zeller NVIDIA D

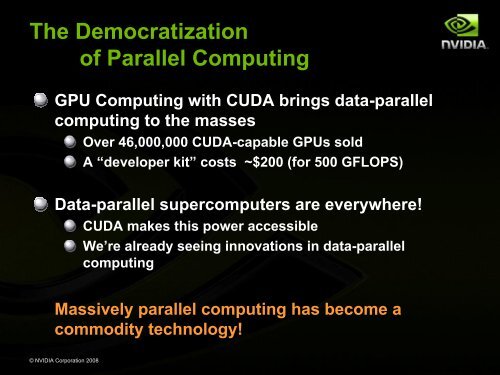

- Page 3 and 4: © NVIDIA Corporation 2008 GPU Comp

- Page 5 and 6: Parallel Computing’s Dark Age But

- Page 7 and 8: Enter the GPU GPU = Graphics Proces

- Page 9: Enter CUDA CUDA is a scalable paral

- Page 13 and 14: GPUs Are Getting Faster, Faster

- Page 15 and 16: CUDA Pro

- Page 17 and 18: Heterogeneous Programming CUDA = se

- Page 19 and 20: Hierarchy of Concurrent Threads Thr

- Page 21 and 22: Memory Hierarchy Sequential Kernels

- Page 23 and 24: CUDA Language: C with Minimal Exten

- Page 25 and 26: Example: Increment Array Elements C

- Page 27 and 28: Example: Host Code // allocate host

- Page 29 and 30: More on Memory Spaces Each thread c

- Page 31 and 32: Compiling CUDA for NVIDIA GPUs Any

- Page 33 and 34: Device Emulation Mode Pitfalls Emul

- Page 35 and 36: Reduction Exercise At the end of ea

- Page 37 and 38: Reduce 1: Blocking the Data Block I

- Page 39 and 40: Reduce 1: Multi-Pass Reduction Bloc

- Page 41 and 42: CUDA Imp

- Page 43 and 44: Hardware Implementation: A Set of S

- Page 45 and 46: Hardware Implementation: Execution

- Page 47 and 48: Host Synchronization All kernel lau

- Page 49 and 50: Multiple CPU Threads and CUDA CUDA

- Page 51 and 52: Performance Optimization Expose as

- Page 53 and 54: Expose Parallelism: CPU/GPU Paralle

- Page 55 and 56: Minimize CPU ↔ GPU Data Transfers

- Page 57 and 58: Global Memory Reads/Writes Global m

- Page 59 and 60: Coalesced Global Memory Accesses

- Page 61 and 62: Non-Coalesced Global Memory Accesse

- Page 63 and 64: Avoiding Non-Coalesced Accesses For

- Page 65 and 66: Profiler Signals Events are tracked

- Page 67 and 68: Back to Reduce Exercise: Profile wi

- Page 69 and 70: Reduce 2 Thread IDs Block IDs Distr

- Page 71 and 72: Maximize Use of Shared Memory Share

- Page 73 and 74: Example: Square Matrix Multiplicati

- Page 75 and 76: Example: Avoiding Non-Coalesced flo

- Page 77 and 78: Example: Avoiding Non-Coalesced flo

- Page 79 and 80: Example: Avoiding Non-Coalesced flo

- Page 81 and 82: Execution Configuration: Constraint

- Page 83 and 84: Execution Configuration: Heuristics

- Page 85 and 86: Back to Reduce Exercise: Problem wi

- Page 87 and 88: Parallel Reduction Complexity Takes

- Page 89 and 90: Reduce 3: Go Ahead! Open up reduce\

- Page 91 and 92: Arithmetic Instruction Throughput f

- Page 93 and 94: Runtime Math Library There are two

- Page 95 and 96: What You Need To Know FP64 instruct

- Page 97 and 98: Mixed Precision Arithmetic Research

- Page 99 and 100: Single Precision Floating Point

- Page 101 and 102: Instruction Predication Comparison

- Page 103 and 104: Shared Memory Is Banked Bandwidth o

- Page 105 and 106: Bank Addressing Examples 2-way bank

- Page 107 and 108: Back to Reduce Exercise: Problem wi

- Page 109 and 110: Reduce 3: Bank Conflicts Showed for

- Page 111 and 112: Reduce 4: Go Ahead! Open up reduce\

- Page 113 and 114: Reduce 5: Unrolled Loop if (numThre

- Page 115 and 116: Reduce 5: Final Unrolled Loop if (n

- Page 117 and 118: Coming Up Soon CUDA 2.0

- Page 119 and 120: Extra Slides

- Page 121 and 122: Applications - Condensed 3D image a

- Page 123 and 124: Performance GPU Electromagnetic Fie

- Page 125 and 126: EvolvedMachines 130X Speed up Brain

- Page 127 and 128: nbody Astrophysics Astrophysics res

- Page 129 and 130: A quick review device = GPU = set o

- Page 131 and 132: Language Extensions: Function Type

- Page 133 and 134: Language Extensions: Execution Conf

- Page 135 and 136: Common Runtime Component Provides:

- Page 137 and 138: Common Runtime Component: Mathemati

- Page 139 and 140: Host Runtime Component Provides fun

- Page 141 and 142: Host Runtime Component: Memory Mana

- Page 143 and 144: Host Runtime Component: Interoperab

- Page 145 and 146: Host Runtime Component: Error Handl

- Page 147 and 148: Device Runtime Component: Mathemati

- Page 149 and 150: Device Runtime Component: Texture F

- Page 151 and 152: Compilation Any source file contain

- Page 153 and 154: Role of Open64 Open64 compiler give

- Page 155 and 156: CUDA Libraries CUBLAS CUFFT

- Page 157: CUFFT Library Efficient implementat