BDS market development guide.pdf - PACA


• Donors may be interested in both of these perspectives but, given their immediate exposure to more political pressures, may also want to prove that public funds are generating employment gains in SMEs or having a wider positive impact on society.

A major problem associated with monitoring and evaluation in BDS (and in other spheres) is a blurring of improve and prove agendas. Practical management decision-making, accountability, and learning purposes are lumped uncomfortably together. Characteristically, this can lead, on the one hand, to organizations not getting the information they need to make rational decisions and, on the other, to half-hearted (and doomed) attempts to measure impact that do not prove impact because there are so many caveats associated with the data.

Underpinning new approaches to monitoring and evaluation in BDS market development (Figure 17) is a new and candid transparency over why we measure.

• Most monitoring and evaluation is concerned with improving performance; this should be the immediate priority and be used on a regular basis. Common methods (market research tools, case studies, provider financial analysis, and surveys) are focused on this goal.

• Monitoring and evaluation methods that can prove impact, that is, produce valid, generalizable findings that take account of attribution, are difficult and expensive. If one is serious about proving impact, there is no real alternative to these; for most organizations, these kinds of studies will not be possible or justifiable.

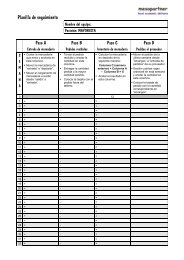

Figure 17: Why We Measure in BDS: Emerging Trends

The Old

• The purposes of monitoring and evaluation often ill-defined and ambiguous

• In particular, the objectives of improving performance and proving impact often blurred

• Result? Neither objective is achieved

The New

Recognition that:

• Prove and improve objectives are both valid but are different

• Reasons for monitoring and evaluation (why) “drive” choice of indicators (what) and methods (how)

Consequently we need:

• Greater clarity in monitoring and evaluation objectives

• Separation of improve and prove approaches to monitoring and evaluation

OUTSTANDING ISSUES

Although the general trends and overall direction in monitoring and evaluation in BDS are clear, there are still many aspects where there is no consensus among agencies and where

Chapter Five: How do We Assess Our Performance? Monitoring and Evaluation BDS Interventions