pink-book

pink-book

pink-book

You also want an ePaper? Increase the reach of your titles

YUMPU automatically turns print PDFs into web optimized ePapers that Google loves.

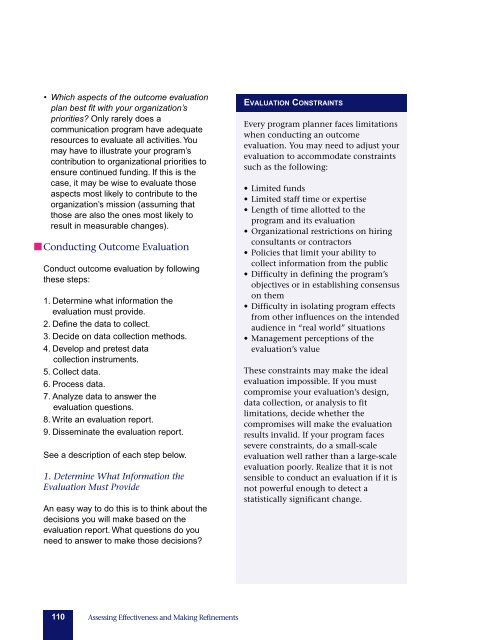

• Which aspects of the outcome evaluation<br />

plan best fit with your organization’s<br />

priorities? Only rarely does a<br />

communication program have adequate<br />

resources to evaluate all activities. You<br />

may have to illustrate your program’s<br />

contribution to organizational priorities to<br />

ensure continued funding. If this is the<br />

case, it may be wise to evaluate those<br />

aspects most likely to contribute to the<br />

organization’s mission (assuming that<br />

those are also the ones most likely to<br />

result in measurable changes).<br />

Conducting Outcome Evaluation<br />

Conduct outcome evaluation by following<br />

these steps:<br />

1. Determine what information the evaluation must provide.<br />

2. Define the data to collect.<br />

3. Decide on data collection methods.<br />

4. Develop and pretest data collection instruments.<br />

5. Collect data.<br />

6. Process data.<br />

7. Analyze data to answer the evaluation questions.<br />

8. Write an evaluation report.<br />

9. Disseminate the evaluation report.<br />

See a description of each step below.<br />

1. Determine What Information the Evaluation Must Provide<br />

An easy way to do this is to think about the<br />

decisions you will make based on the<br />

evaluation report. What questions do you<br />

need to answer to make those decisions?<br />

EVALUATION CONSTRAINTS<br />

Every program planner faces limitations<br />

when conducting an outcome<br />

evaluation. You may need to adjust your<br />

evaluation to accommodate constraints<br />

such as the following:<br />

• Limited funds<br />

• Limited staff time or expertise<br />

• Length of time allotted to the program and its evaluation<br />

• Organizational restrictions on hiring consultants or contractors<br />

• Policies that limit your ability to collect information from the public<br />

• Difficulty in defining the program’s objectives or in establishing consensus on them<br />

• Difficulty in isolating program effects from other influences on the intended audience in “real world” situations<br />

• Management perceptions of the evaluation’s value<br />

These constraints may make the ideal<br />

evaluation impossible. If you must<br />

compromise your evaluation’s design,<br />

data collection, or analysis to fit<br />

limitations, decide whether the<br />

compromises will make the evaluation<br />

results invalid. If your program faces<br />

severe constraints, do a small-scale<br />

evaluation well rather than a large-scale<br />

evaluation poorly. Realize that it is not<br />

sensible to conduct an evaluation if it is<br />

not powerful enough to detect a<br />

statistically significant change.<br />
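To make the point about statistical power concrete, the sketch below estimates how many respondents a survey would need in each wave to detect a change in a proportion (for example, the share of the intended audience reporting a behavior before and after the program). It is only an illustration under stated assumptions: independent samples before and after, a two-sided test at the 5 percent level, 80 percent power, and the standard normal-approximation formula for comparing two proportions. The function name and the example percentages are hypothetical and do not come from this guide.

```python
import math
from statistics import NormalDist


def sample_size_two_proportions(p_before, p_after, alpha=0.05, power=0.80):
    """Approximate respondents needed per survey wave to detect a change
    from p_before to p_after (normal approximation, independent samples).

    alpha and power are conventional defaults, assumed here for illustration.
    """
    if p_before == p_after:
        raise ValueError("The two proportions must differ.")

    # Critical values from the standard normal distribution.
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # two-sided test
    z_beta = NormalDist().inv_cdf(power)

    # Pooled proportion under the hypothesis of no change.
    p_bar = (p_before + p_after) / 2

    numerator = (
        z_alpha * math.sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * math.sqrt(p_before * (1 - p_before) + p_after * (1 - p_after))
    ) ** 2

    return math.ceil(numerator / (p_before - p_after) ** 2)


# Hypothetical example: a 10-point change versus a 5-point change.
n_large_change = sample_size_two_proportions(0.30, 0.40)
n_small_change = sample_size_two_proportions(0.30, 0.35)
print(n_large_change, n_small_change)
```

The smaller the change you expect, the more respondents you need. If the required number exceeds what your budget and staff time allow, that is a signal to narrow the evaluation to outcomes where a larger, detectable change is plausible.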

110 Assessing Effectiveness and Making Refinements