HIGH-PERFORMANCE COMPUTING

Platform Load Sharing Facility resource manager

Platform LSF is a popular resource manager for clusters. Its focus is to maximize resource utilization within the constraints of local administration policies. Platform Computing offers two products: Platform LSF and Platform LSF HPC. LSF is designed to handle a broad range of job types, such as batch, parallel, distributed, and interactive jobs. LSF HPC is optimized for HPC parallel applications. It provides additional facilities for intelligent scheduling, which allow different queues to offer different QoS levels. LSF also implements a hierarchical fair-share scheduling algorithm to balance resources among users under all load conditions.

Platform LSF has built-in schedulers that implement advanced scheduling algorithms to provide easy configurability and high reliability for users. In addition to basic scheduling algorithms, Platform LSF uses advanced techniques such as advance reservation and backfill.

Platform LSF and Platform LSF HPC both have a dynamic scheduling decision mechanism, in which scheduling decisions are based on processing load. Based on these decisions, jobs can be migrated among compute nodes or rescheduled, and loads can be balanced among compute nodes in heterogeneous environments. These features make Platform LSF suitable for a broad range of HPC applications. Platform LSF can also apply multiple scheduling algorithms to different queues simultaneously. Platform LSF HPC can make intelligent scheduling decisions based on the features of advanced interconnect networks, thus enhancing process mapping for parallel applications.

The term "resource" has a broad definition in Platform LSF and Platform LSF HPC. Resources can be CPUs, memory, storage space, or software licenses.
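The basic idea of load-based placement over several kinds of resources can be sketched in a few lines. This is a minimal, hypothetical sketch, not how Platform LSF is implemented: the `Host` and `Job` classes, the `place_job` function, and the single normalized load figure per host are illustrative assumptions. The sketch picks the least-loaded host that satisfies a job's CPU and memory request and draws software licenses from one cluster-wide pool.

```python
from dataclasses import dataclass, field


@dataclass
class Host:
    name: str
    free_cpus: int
    free_mem_gb: float
    load: float  # hypothetical normalized load index; lower = less busy


@dataclass
class Job:
    name: str
    cpus: int
    mem_gb: float
    licenses: dict = field(default_factory=dict)  # license name -> count


def place_job(job, hosts, license_pool):
    """Pick the least-loaded host that satisfies the job, or return None.

    Per-host resources (CPUs, memory) are checked on each host; software
    licenses come from one cluster-wide pool shared by all hosts.
    """
    # A job that cannot get its licenses waits, however idle the hosts are.
    if any(license_pool.get(lic, 0) < n for lic, n in job.licenses.items()):
        return None
    eligible = [h for h in hosts
                if h.free_cpus >= job.cpus and h.free_mem_gb >= job.mem_gb]
    if not eligible:
        return None
    # Load-based decision: prefer the eligible host with the lowest load.
    best = min(eligible, key=lambda h: h.load)
    best.free_cpus -= job.cpus
    best.free_mem_gb -= job.mem_gb
    for lic, n in job.licenses.items():
        license_pool[lic] -= n
    return best.name


if __name__ == "__main__":
    hosts = [Host("node1", 4, 8.0, 0.9), Host("node2", 2, 16.0, 0.2),
             Host("node3", 8, 4.0, 0.1)]
    pool = {"solver": 1}
    print(place_job(Job("sim-a", 2, 6.0, {"solver": 1}), hosts, pool))  # node2
    print(place_job(Job("sim-b", 1, 1.0, {"solver": 1}), hosts, pool))  # None
```

In the sketch, node3 is the least-loaded host but lacks memory for sim-a, so node2 wins; sim-b then waits because the only license is in use. A software license is treated as just another countable resource, tracked cluster-wide rather than per host.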
(In some sectors, software licenses are expensive and are considered a valuable resource.)

Platform LSF and Platform LSF HPC each have an extensive advance reservation system that can reserve different kinds of resources. Some distributed applications require many instances of the same application to perform parametric studies. Platform LSF and Platform LSF HPC allow users to submit a job group that can contain a large number of jobs, making parametric studies much easier to manage.

Platform LSF and Platform LSF HPC can interface with external schedulers such as Maui. External schedulers can complement the features of the resource manager and enable sophisticated scheduling. For example, using Platform LSF HPC, the hierarchical fair-share algorithm can dynamically adjust priorities and feed these priorities to Maui for use in scheduling decisions.

Maui job scheduler

Maui is an advanced open source job scheduler that is specifically designed to optimize system utilization in policy-driven, heterogeneous HPC environments. Its focus on fast turnaround of large parallel jobs makes the Maui scheduler highly suitable for HPC. Maui can work with several common resource managers, including Platform LSF and Platform LSF HPC, and can potentially improve scheduling performance compared to their built-in schedulers.

Maui has a two-phase scheduling algorithm. During the first phase, high-priority jobs are scheduled using advance reservation. In the second phase, a backfill algorithm schedules low-priority jobs in the gaps between previously scheduled jobs. Maui also applies a fair-share technique, using job history when making scheduling decisions. Note: Maui's internal behavior is based on a single, unified queue.
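The two-phase idea (reserve for high-priority work first, then backfill around the reservation) can be illustrated with a small simulation. This is a hypothetical sketch in the style of EASY backfilling, not Maui's implementation. It assumes identical nodes, user-supplied wall-time limits that jobs never exceed, and a single reservation for the highest-priority job that cannot start immediately. Maui's own policies are far more configurable. The `Job` class and `schedule` function are illustrative names.

```python
from dataclasses import dataclass


@dataclass
class Job:
    name: str
    priority: int   # higher value = more important (hypothetical scale)
    nodes: int      # number of identical nodes requested
    walltime: int   # user-supplied run-time limit, in abstract time units


def schedule(queue, total_nodes, running, now=0):
    """One scheduling pass: priority starts, one reservation, then backfill.

    running: list of (end_time, nodes) tuples for jobs already executing.
    Returns (names_of_started_jobs, reservation), where reservation is a
    (job_name, start_time) tuple or None.
    """
    free = total_nodes - sum(n for _, n in running)
    running = list(running)  # copy so the caller's list is not modified
    started = []
    reservation = None
    shadow_time = spare_nodes = 0
    # A single unified queue, ordered by priority (highest first).
    for job in sorted(queue, key=lambda j: j.priority, reverse=True):
        if job.nodes > total_nodes:
            continue  # can never fit on this cluster
        if reservation is None:
            if job.nodes <= free:
                # Start jobs in strict priority order while they fit.
                free -= job.nodes
                running.append((now + job.walltime, job.nodes))
                started.append(job.name)
                continue
            # Phase 1: reserve the earliest time enough nodes will be free.
            avail = free
            for end, n in sorted(running):
                avail += n
                if avail >= job.nodes:
                    shadow_time, spare_nodes = end, avail - job.nodes
                    break
            reservation = (job.name, shadow_time)
        elif job.nodes <= free:
            # Phase 2: backfill only if the reserved start is not delayed.
            finishes_first = now + job.walltime <= shadow_time
            if finishes_first or job.nodes <= spare_nodes:
                free -= job.nodes
                if not finishes_first:
                    spare_nodes -= job.nodes  # still running at shadow_time
                started.append(job.name)
    return started, reservation


if __name__ == "__main__":
    # 8 nodes; one job already holds 4 nodes until t=10.
    queue = [Job("A", 10, 7, 5), Job("B", 5, 2, 20), Job("C", 3, 2, 6),
             Job("D", 1, 1, 30), Job("H", 20, 1, 3)]
    started, reservation = schedule(queue, total_nodes=8, running=[(10, 4)])
    print(started, reservation)  # ['H', 'C', 'D'] ('A', 10)
```

Here job A cannot start, so it receives a reservation at t=10. Job B is skipped because it would still hold two nodes at t=10, when only one node is spare. Jobs C and D are backfilled: C finishes before the reservation, and D fits in the spare node. As long as jobs respect their wall-time limits, backfilling never delays the reserved job. Like Maui, the sketch draws every job, high- or low-priority, from one priority-ordered queue.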
This maximizes the opportunity to utilize resources.

Typically, users are guaranteed a certain QoS, but Maui gives administrators a significant amount of control, allowing local policies to govern access to resources, especially for scheduling. For example, administrators can grant different QoS and access levels to users and jobs, which can be identified in advance. Maui uses a tool called QBank for allocation management; QBank allows multisite control over the use of resources. Another Maui feature allows charge rates (the amount users pay for compute resources) to be based on QoS, resources, and time of day. Maui can scale to thousands of jobs despite its nondistributed scheduler daemon, which is centralized and runs on a single node.

Maui supports job preemption, which can occur under several conditions. High-priority jobs can preempt lower-priority or backfill jobs if the resources needed to run the high-priority jobs are not available. In some cases, resources reserved for high-priority jobs can be used to run low-priority jobs when no high-priority jobs are in the queue. However, when high-priority jobs are later submitted, these low-priority jobs can be preempted to reclaim the resources for the high-priority jobs.

Maui has a simulation mode that can be used to evaluate the effect of queuing parameters on scheduler performance. Because each HPC environment has a unique job profile, the parameters of the queues and the scheduler can be tuned based on historical logs to maximize scheduler performance.

Satisfying ever-increasing computing demands

As cluster sizes scale to satisfy growing computing needs in industry as well as in academia, advanced schedulers can help maximize resource utilization and QoS. The profile of the jobs, the nature of the computation they perform, and the number of jobs submitted can help determine the benefits of using advanced schedulers.

Saeed Iqbal, Ph.D., is a systems engineer and advisor in the Scalable Systems Group at Dell. He has a Ph.D. in Computer Engineering from The University of Texas at Austin.

Rinku Gupta is a systems engineer and advisor in the Scalable Systems Group at Dell. She has a B.E. in Computer Engineering from Mumbai University in India and an M.S. in Computer Information Science from The Ohio State University.

Yung-Chin Fang is a senior consultant in the Scalable Systems Group at Dell. He specializes in cyberinfrastructure management and high-performance computing.

136 POWER SOLUTIONS Reprinted from Dell Power Solutions, February 2005. Copyright © 2005 Dell Inc. All rights reserved. February 2005

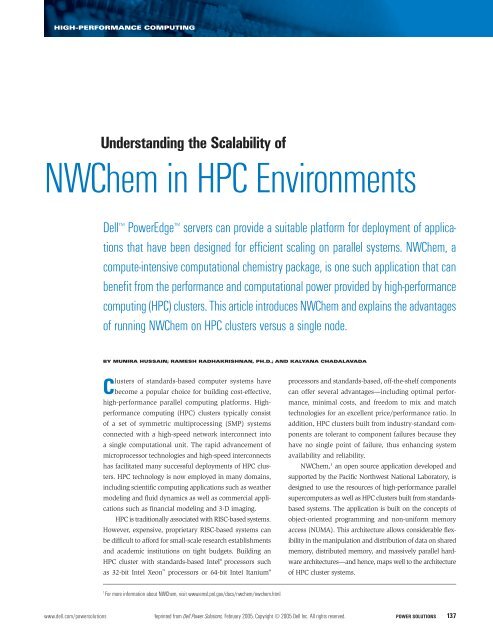

Understanding the Scalability of NWChem in HPC Environments

Dell PowerEdge servers can provide a suitable platform for deploying applications that have been designed for efficient scaling on parallel systems. NWChem, a compute-intensive computational chemistry package, is one such application that can benefit from the performance and computational power provided by high-performance computing (HPC) clusters. This article introduces NWChem and explains the advantages of running NWChem on HPC clusters versus a single node.

BY MUNIRA HUSSAIN; RAMESH RADHAKRISHNAN, PH.D.; AND KALYANA CHADALAVADA

Clusters of standards-based computer systems have become a popular choice for building cost-effective, high-performance parallel computing platforms. High-performance computing (HPC) clusters typically consist of a set of symmetric multiprocessing (SMP) systems connected by a high-speed network interconnect into a single computational unit. The rapid advancement of microprocessor technologies and high-speed interconnects has facilitated many successful deployments of HPC clusters. HPC technology is now employed in many domains, including scientific computing applications such as weather modeling and fluid dynamics as well as commercial applications such as financial modeling and 3-D imaging.

HPC is traditionally associated with RISC-based systems. However, expensive, proprietary RISC-based systems can be difficult for small research establishments and budget-constrained academic institutions to afford. Building an HPC cluster with standards-based Intel® processors, such as 32-bit Intel Xeon processors or 64-bit Intel Itanium® processors, and standards-based, off-the-shelf components can offer several advantages, including high performance, low cost, and the freedom to mix and match technologies for an excellent price/performance ratio.
In addition, HPC clusters built from industry-standard components are tolerant of component failures because they can be built with no single point of failure, thus enhancing system availability and reliability.

NWChem,¹ an open source application developed and supported by the Pacific Northwest National Laboratory, is designed to use the resources of high-performance parallel supercomputers as well as HPC clusters built from standards-based systems. The application is built on the concepts of object-oriented programming and non-uniform memory access (NUMA). This architecture allows considerable flexibility in the manipulation and distribution of data on shared-memory, distributed-memory, and massively parallel hardware architectures, and hence maps well to the architecture of HPC cluster systems.

¹ For more information about NWChem, visit www.emsl.pnl.gov/docs/nwchem/nwchem.html.