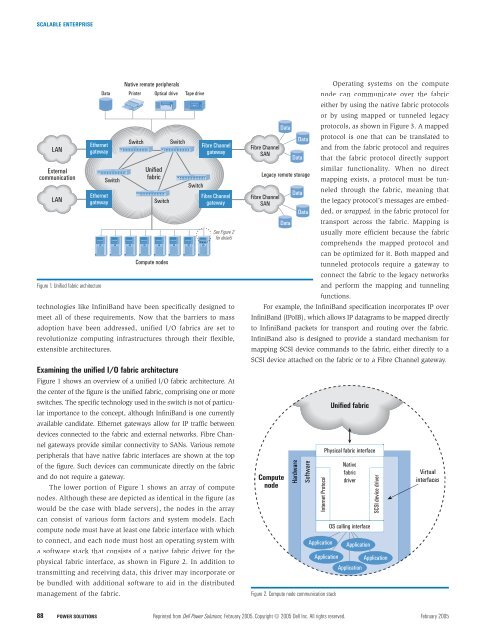

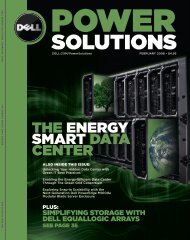

SCALABLE ENTERPRISE

technologies like InfiniBand have been specifically designed to meet all of these requirements. Now that the barriers to mass adoption have been addressed, unified I/O fabrics are set to revolutionize computing infrastructures through their flexible, extensible architectures.

Examining the unified I/O fabric architecture

Figure 1 shows an overview of a unified I/O fabric architecture. At the center of the figure is the unified fabric, comprising one or more switches. The specific technology used in the switch is not of particular importance to the concept, although InfiniBand is one currently available candidate. Ethernet gateways allow for IP traffic between devices connected to the fabric and external networks. Fibre Channel gateways provide similar connectivity to SANs. Various remote peripherals that have native fabric interfaces are shown at the top of the figure. Such devices can communicate directly on the fabric and do not require a gateway.

Figure 1. Unified fabric architecture

The lower portion of Figure 1 shows an array of compute nodes. Although these are depicted as identical in the figure (as would be the case with blade servers), the nodes in the array can consist of various form factors and system models. Each compute node must have at least one fabric interface with which to connect, and each node must host an operating system with a software stack that consists of a native fabric driver for the physical fabric interface, as shown in Figure 2.
In addition to transmitting and receiving data, this driver may incorporate or be bundled with additional software to aid in the distributed management of the fabric.

Figure 2. Compute node communication stack

Operating systems on the compute node can communicate over the fabric either by using the native fabric protocols or by using mapped or tunneled legacy protocols, as shown in Figure 3. A mapped protocol is one that can be translated to and from the fabric protocol and requires that the fabric protocol directly support similar functionality. When no direct mapping exists, a protocol must be tunneled through the fabric, meaning that the legacy protocol’s messages are embedded, or wrapped, in the fabric protocol for transport across the fabric. Mapping is usually more efficient because the fabric comprehends the mapped protocol and can be optimized for it. Both mapped and tunneled protocols require a gateway to connect the fabric to the legacy networks and perform the mapping and tunneling functions.

For example, the InfiniBand specification incorporates IP over InfiniBand (IPoIB), which allows IP datagrams to be mapped directly to InfiniBand packets for transport and routing over the fabric. InfiniBand also is designed to provide a standard mechanism for mapping SCSI device commands to the fabric, either directly to a SCSI device attached on the fabric or to a Fibre Channel gateway.

Reprinted from Dell Power Solutions, February 2005. Copyright © 2005 Dell Inc. All rights reserved.
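The difference between mapping and tunneling described above can be sketched in a few lines of Python. This is only an illustration under stated assumptions: `LegacyFrame`, `FabricFrame`, the three parameters, and the byte layout used for wrapping are hypothetical and do not reflect any real InfiniBand, Ethernet, or Fibre Channel frame format.

```python
import struct
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class LegacyFrame:
    """Hypothetical legacy frame carrying three parameters."""
    param_a: int
    param_b: int
    param_c: bytes


@dataclass(frozen=True)
class FabricFrame:
    """Hypothetical fabric frame: native fields, or an opaque tunneled payload."""
    param_a: Optional[int] = None
    param_b: Optional[int] = None
    param_c: Optional[bytes] = None
    tunneled: Optional[bytes] = None


def map_to_fabric(frame: LegacyFrame) -> FabricFrame:
    # Mapping: every legacy parameter has a fabric equivalent, so the gateway
    # translates field by field and the fabric can interpret the result.
    return FabricFrame(frame.param_a, frame.param_b, frame.param_c)


def map_to_legacy(frame: FabricFrame) -> LegacyFrame:
    if frame.tunneled is not None:
        raise ValueError("frame carries a tunneled payload, not mapped fields")
    return LegacyFrame(frame.param_a, frame.param_b, frame.param_c)


def tunnel(frame: LegacyFrame) -> FabricFrame:
    # Tunneling: serialize the whole legacy frame and wrap it. The fabric
    # carries the bytes without interpreting them.
    header = struct.pack("!HHH", frame.param_a, frame.param_b, len(frame.param_c))
    return FabricFrame(tunneled=header + frame.param_c)


def untunnel(frame: FabricFrame) -> LegacyFrame:
    if frame.tunneled is None:
        raise ValueError("frame has no tunneled payload")
    a, b, n = struct.unpack_from("!HHH", frame.tunneled)
    return LegacyFrame(a, b, frame.tunneled[6:6 + n])


legacy = LegacyFrame(param_a=1, param_b=2, param_c=b"payload")
assert map_to_legacy(map_to_fabric(legacy)) == legacy  # mapped round trip
assert untunnel(tunnel(legacy)) == legacy              # tunneled round trip
```

In the mapped case the fabric frame exposes the parameters as its own fields, which is what allows the fabric to be optimized for that protocol. In the tunneled case the fabric sees only an opaque byte string.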

Figure 3. Protocol mapping and tunneling

Considering InfiniBand for the I/O fabric

InfiniBand, designed as a next-generation I/O interconnect for internal server devices, is one viable option for unified I/O fabrics. This switched, point-to-point architecture specifies several types of fabric connections, allowing compute nodes, I/O controllers, peripherals, and traffic management elements to communicate over a single high-speed fabric. Because it can accommodate several types of communication models, like RDMA and channel I/O, InfiniBand is a compelling candidate for unifying legacy communication technologies. InfiniBand meets most of the suitability requirements for a unified I/O fabric primarily because it was conceived and designed to function as a single, ubiquitous interconnect fabric.¹

Transparent coexistence between legacy technologies and unified fabrics is a crucial requirement for fabric technology. InfiniBand is designed to support connections to legacy networks via target channel adapters (TCAs). TCAs enable legacy I/O protocols to use InfiniBand networks and vice versa. They support both mapping and tunneling operations like those shown in Figure 3. Tunneling requires encapsulating the legacy transport and payload packets within the InfiniBand architecture packet on the unified fabric, allowing the legacy protocol software at each end of the connection to remain unaltered. For example, a TCP/IP connection between a legacy network node and a unified fabric node can take place entirely over the TCP/IP stack. The unified fabric node needs the InfiniBand software only to remove the TCP/IP packet from the InfiniBand packet.
Mapping, or transport offloading, actually removes the IP information from the InfiniBand packet and transmits it natively on the IP network. The unified fabric node can take full advantage of the InfiniBand transport features, but the TCA must translate between InfiniBand packets and IP packets, which is a potential bottleneck. Although InfiniBand was designed with transparency in mind, the performance and benefits of these methods will ultimately depend on the design and implementation of TCA solutions.

The high bandwidth of InfiniBand makes it possible for several legacy protocols to coexist on the same unified connection. InfiniBand currently supports multiple levels of bandwidth. Single-link widths, also called 1X widths, support a bidirectional transfer rate of up to 500 MB/sec. Two other link widths, 4X and 12X, support rates of up to 2 GB/sec and 6 GB/sec, respectively. Compared with today’s speeds of up to 125 MB/sec (1 Gbps) for Ethernet and 250 MB/sec (2 Gbps) for Fibre Channel connections, InfiniBand bandwidth has enough capacity to support both types of traffic through a single connection. However, as legacy network bandwidth improves, unified fabric solutions must scale to meet the enhanced speeds of traditional I/O networks. InfiniBand must increase its bandwidth or unified I/O solutions must use multiple InfiniBand connections to garner a higher aggregate bandwidth.

As with other packet-based protocols, InfiniBand employs safety measures to help ensure proper disassembly, transmission, and reassembly of transported data. This can be considered fault tolerance in its most basic form. Furthermore, just as other protocols specify fault tolerance between connection ports, InfiniBand allows for two ports on the same channel adapter to be used in a fault-tolerant configuration.
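A two-port fault-tolerant configuration of the kind just mentioned could behave roughly as in the sketch below. The article does not say how failover is performed, so the active/standby policy shown here is an assumption, and the `Port` and `DualPortAdapter` classes are hypothetical models rather than any real InfiniBand driver interface.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Port:
    """Hypothetical model of one port on a channel adapter."""
    name: str
    link_up: bool = True
    sent: List[bytes] = field(default_factory=list)

    def send(self, data: bytes) -> None:
        if not self.link_up:
            raise ConnectionError(f"{self.name}: link down")
        self.sent.append(data)


class DualPortAdapter:
    """Assumed active/standby pair: traffic moves to the standby on failure."""

    def __init__(self, primary: Port, standby: Port) -> None:
        self.active, self.standby = primary, standby

    def send(self, data: bytes) -> str:
        try:
            self.active.send(data)
        except ConnectionError:
            # Fail over: swap roles, then retry once on the new active port.
            # If that port is also down, the error propagates to the caller.
            self.active, self.standby = self.standby, self.active
            self.active.send(data)
        return self.active.name


adapter = DualPortAdapter(Port("port1"), Port("port2"))
adapter.send(b"a")              # goes out on port1
adapter.active.link_up = False  # simulate a link failure on port1
adapter.send(b"b")              # retried on port2 after failover
```

The caller sees a single `send` interface and does not need to know which physical port carried the data.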
Therefore, InfiniBand can help enable a unified fabric solution to implement fault tolerance and failover features. Fault tolerance at the legacy technology layer, however, must still be provided by legacy software stacks. If a TCA is used to connect a legacy network to the InfiniBand fabric, the unified fabric solution must provide fault-tolerant links between the two. Again, the performance and benefits of internetwork fault tolerance will depend on the design and implementation of unified fabric solutions.

InfiniBand was designed with a goal of multiple physical computing devices existing on the same network. Different physical connections might be necessary for different elements of the unified network, and different I/O access models might be

¹ For more information, see www.infinibandta.org/ibta.