- Page 1 and 2:

VYSOKÉ UČENÍ TECHNICKÉ V BRNĚ

- Page 3 and 4:

Abstract Statistical language mod

- Page 5 and 6:

Contents 1 Introduction 4 1.1 Motiv

- Page 7 and 8:

6.2.3 Reduction of Vocabulary Size

- Page 9 and 10:

Maybe the most popular vision of fu

- Page 11 and 12:

Chapter 6 presents further extensio

- Page 13 and 14:

Chapter 2 Overview of Stati

- Page 15 and 16:

2.1 Evaluation 2.1.1 Perplexity Eva

- Page 17 and 18:

ALP can be used to obtain prior pro

- Page 19 and 20:

• Good theoretical motivation •

- Page 21 and 22:

abilities of n-grams are stored in

- Page 23 and 24:

main (static) n-gram model. As the

- Page 25 and 26:

There are many popular examples sho

- Page 27 and 28:

y Chen et al., who proposed a so-ca

- Page 29 and 30:

confusion among researchers, and ma

- Page 31 and 32:

language model took almost a week u

- Page 33 and 34:

w(t) s(t-1) s(t) U V W y(t) Figure

- Page 35 and 36:

ate is halved at start of every new

- Page 37 and 38:

or using matrix-vector notation as

- Page 39 and 40:

information for more than 5 time st

- Page 41 and 42:

A simple solution to the exploding

- Page 43 and 44:

output layer changes to computation

- Page 45 and 46:

While RNN models can overcome this

- Page 47 and 48:

complex or random architectures (su

- Page 49 and 50:

While for any of the previous point

- Page 51 and 52:

where λ is the interpolation weigh

- Page 53 and 54:

model with default SRILM cutoffs pr

- Page 55 and 56:

experiments, we have used the one i

- Page 57 and 58:

Perplexity (Penn corpus) 145 140 13

- Page 59 and 60:

with syntactical NNLMs would be pre

- Page 61 and 62:

Table 4.3: Combination of individua

- Page 63 and 64:

Table 4.6: Results on Penn Treebank

- Page 65 and 66:

4.6 Conclusion of the Model Combina

- Page 67 and 68:

were: 400 classes, hidden layer siz

- Page 69 and 70:

Entropy per word on the WSJ test da

- Page 71 and 72:

Table 5.3: Results on the WSJ setup

- Page 73 and 74:

Table 5.5: Results for models

- Page 75 and 76:

trained together with a maximum ent

- Page 77 and 78:

wt-3 wt-2 wt-1 D D D P(wt|context)

- Page 79 and 80:

n-gram probabilities. However, it w

- Page 81 and 82: Table 6.1: Training corpora for NIS

- Page 83 and 84: Perplexity 360 340 320 300 280 260

- Page 85 and 86: Entropy per word 9 8.5 8 7.5 7 6.5

- Page 87 and 88: 1 a a a 1 2 3 P(w(t)|*) ONE TWO THR

- Page 89 and 90: Table 6.4: Perplexity on the evalua

- Page 91 and 92: Entropy reduction per word over KN4

- Page 93 and 94: Table 6.6: Perplexity with the new

- Page 95 and 96: Entropy reduction over KN5 -0.04 -0

- Page 97 and 98: as a baseline, and 12.3% after resc

- Page 99 and 100: Table 7.1: BLEU on IWSLT 2005 Machi

- Page 101 and 102: Table 7.3: Size of compressed text

- Page 103 and 104: Table 7.4: Accuracy of different la

- Page 105 and 106: Table 7.6: Entropy on PTB with n-gr

- Page 107 and 108: 8.1 Machine Learning One possible d

- Page 109 and 110: that almost every non-trivial compu

- Page 111 and 112: supervision such as one digit at a

- Page 113 and 114: Chapter 9 Conclusion and Future Wor

- Page 115 and 116: from the expensive part of the mode

- Page 117 and 118: Bibliography [1] A. Alexandrescu, K

- Page 119 and 120: [23] D. Filimonov, M. Harper. A joi

- Page 121 and 122: [50] T. Mikolov, S. Kombrink, L. Bu

- Page 123 and 124: [77] W. Wang, M. Harper. The SuperA

- Page 125 and 126: Test Phase After the model is train

- Page 127 and 128: • compute sentence-level scores g

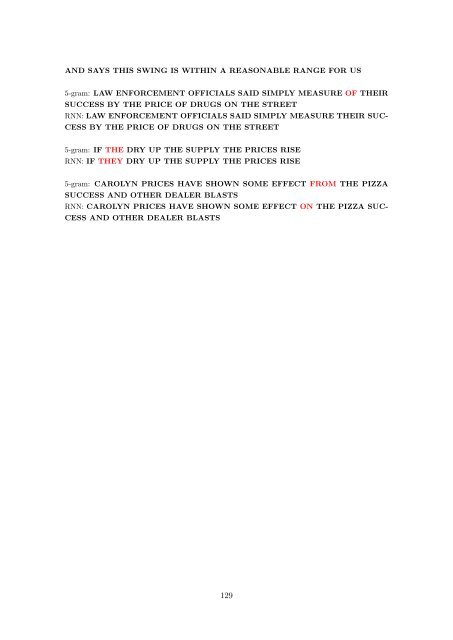

- Page 129 and 130: Appendix B: Data generated from mod

- Page 131: Appendix C: Example of decoded utte