Onto.PT: Towards the Automatic Construction of a Lexical Ontology ...

Onto.PT: Towards the Automatic Construction of a Lexical Ontology ...

Onto.PT: Towards the Automatic Construction of a Lexical Ontology ...

You also want an ePaper? Increase the reach of your titles

YUMPU automatically turns print PDFs into web optimized ePapers that Google loves.

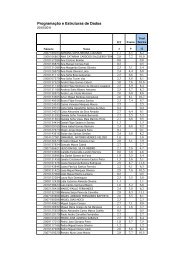

7.2. Ontologising performance

Table 7.2 presents the scores obtained for each measure, by algorithm and relation type. For RP and RP+AC, the threshold θ was empirically set to 0 and 0.55, respectively. For NT+AC, θ was set to 3.

Hypernym-of (210 tb-triples)

| Algorithm | Precision (%) | Recall (%) | F1 (%) | F0.5 (%) | RF1 (%) |
|-----------|---------------|------------|--------|----------|---------|
| RP        | 53.8          | 12.4       | 20.2   | 32.3     | 50.3    |
| AC        | 60.1          | 15.7       | 24.9   | 38.4     | 59.8    |
| RP+AC     | 58.5          | 15.6       | 24.6   | 37.7     | 58.5    |
| NT        | 57.7          | 17.3       | 26.6   | 39.4     | 57.7    |
| NT+AC     | 58.7          | 15.3       | 24.3   | 37.4     | 58.6    |
| PR        | 46.2          | 11.5       | 18.5   | 28.9     | 45.7    |
| MD        | 58.6          | 15.8       | 24.9   | 38.0     | 58.6    |

Part-of (175 tb-triples)

| Algorithm | Precision (%) | Recall (%) | F1 (%) | F0.5 (%) | RF1 (%) |
|-----------|---------------|------------|--------|----------|---------|
| RP        | 56.9          | 10.6       | 17.9   | 30.4     | 47.0    |
| AC        | 58.7          | 14.9       | 23.8   | 37.0     | 58.7    |
| RP+AC     | 64.1          | 16.6       | 26.3   | 40.7     | 64.1    |
| NT        | 50.7          | 15.8       | 24.1   | 35.2     | 50.7    |
| NT+AC     | 59.2          | 15.2       | 24.2   | 37.5     | 59.2    |
| PR        | 50.6          | 12.6       | 20.2   | 31.6     | 49.9    |
| MD        | 59.1          | 15.3       | 24.4   | 37.6     | 59.1    |

Purpose-of (67 tb-triples)

| Algorithm | Precision (%) | Recall (%) | F1 (%) | F0.5 (%) | RF1 (%) |
|-----------|---------------|------------|--------|----------|---------|
| RP        | 51.5          | 5.1        | 9.3    | 18.3     | 32.6    |
| AC        | 63.2          | 13.0       | 21.5   | 35.6     | 63.2    |
| RP+AC     | 63.4          | 13.6       | 22.3   | 36.5     | 63.4    |
| NT        | 48.1          | 15.4       | 23.3   | 33.7     | 48.1    |
| NT+AC     | 62.2          | 13.9       | 22.7   | 36.6     | 62.2    |
| PR        | 56.3          | 10.8       | 18.2   | 30.6     | 56.3    |
| MD        | 60.9          | 12.7       | 20.9   | 34.5     | 60.9    |

Table 7.2: Ontologising algorithms performance results.
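The F1 and F0.5 columns are instances of the standard F-β measure, the weighted harmonic mean of precision P and recall R, F_β = (1 + β²)·P·R / (β²·P + R), where β < 1 gives more weight to precision. The minimal Python sketch below recomputes both from the P and R columns of Table 7.2; the function name `f_beta` is illustrative only.

```python
def f_beta(precision: float, recall: float, beta: float = 1.0) -> float:
    """Standard F-beta: weighted harmonic mean of precision and recall (in %)."""
    if precision == 0 and recall == 0:
        return 0.0  # avoid division by zero when both are null
    b2 = beta ** 2
    return (1 + b2) * precision * recall / (b2 * precision + recall)


# Hypernym-of, RP row of Table 7.2: P = 53.8%, R = 12.4%
print(round(f_beta(53.8, 12.4), 1))       # F1   -> 20.2
print(round(f_beta(53.8, 12.4, 0.5), 1))  # F0.5 -> 32.3
```

Because the published P and R are themselves rounded to one decimal, recomputed values may occasionally differ from the table by about 0.1 (e.g. NT for hypernym-of gives 39.3 rather than 39.4 for F0.5). RF1 cannot be recomputed from the P and R columns alone, so it is left out.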

The comparison shows that the best performing algorithms for hypernymy are AC and NT, which have close F1 and RF1. NT is more likely than AC to produce ties for the best attachments and, thus, to achieve higher recall. However, its precision is lower than AC's. For part-of, RP+AC is clearly the best performing algorithm, with the highest score in every measure. For purpose-of, RP+AC again has the best precision and RF1, but its scores are very close to AC's. Moreover, it is outperformed by NT and NT+AC in recall and F1, and by NT+AC also in F0.5. Once again, NT has higher recall and thus higher F1. NT+AC combines good precision with good recall and therefore has the best F0.5. However, as the set of purpose-of tb-triples contains only 67 instances, the results for this relation might not be significant enough to draw strong conclusions.
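The link between ties and recall can be illustrated with a toy example. The Python sketch below assumes that, when several candidate synset pairs share the best score, all of them are kept as attachments, and that precision and recall are measured against a set of correct synset pairs. The synset names, scores and helper functions are hypothetical and do not reproduce NT or AC themselves.

```python
from itertools import product

# Hypothetical toy setting: terms "a" and "b" of one tb-triple (a, r, b)
# each belong to two synsets; names and scores are illustrative only.
SYNSETS_OF = {"a": ["A1", "A2"], "b": ["B1", "B2"]}
GOLD = {("A1", "B1"), ("A2", "B1")}  # assumed set of correct synset pairs

# A scorer that separates the candidates vs. one that produces ties.
SHARP = {("A1", "B1"): 3, ("A1", "B2"): 1, ("A2", "B1"): 2, ("A2", "B2"): 0}
FLAT = {("A1", "B1"): 2, ("A1", "B2"): 2, ("A2", "B1"): 2, ("A2", "B2"): 1}


def attach(a, b, scores):
    """Keep every candidate synset pair tied for the best score (assumed rule)."""
    candidates = list(product(SYNSETS_OF[a], SYNSETS_OF[b]))
    best = max(scores[pair] for pair in candidates)
    return {pair for pair in candidates if scores[pair] == best}


def precision_recall(selected, gold):
    """Precision over selected pairs, recall over the assumed gold pairs."""
    correct = len(selected & gold)
    return correct / len(selected), correct / len(gold)


for name, scores in (("sharp", SHARP), ("flat", FLAT)):
    p, r = precision_recall(attach("a", "b", scores), GOLD)
    print(f"{name}: precision={p:.2f} recall={r:.2f}")
```

With these toy scores, the sharp scorer selects only (A1, B1), giving precision 1.00 and recall 0.50, while the tie-prone scorer also keeps (A2, B1) and (A1, B2), giving precision 0.67 and recall 1.00: more ties cover more correct pairs at the cost of more wrong ones.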

These results also show that PR has the worst performance for hypernymy tb-triples, scoring lowest in every measure, and the lowest precision for part-of tb-triples, which suggests that PageRank is not adequate for this task. For purpose-of, RP is the worst algorithm, mainly due to its low recall.
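These comparisons can be rechecked mechanically. The following Python sketch copies the scores of Table 7.2 and reports, for each relation and measure, the algorithm with the highest and the lowest score; the names `TABLE` and `extremes` are illustrative only.

```python
# Scores copied from Table 7.2: (precision, recall, F1, F0.5, RF1), in %.
MEASURES = ("Precision", "Recall", "F1", "F0.5", "RF1")
TABLE = {
    "Hypernym-of": {
        "RP": (53.8, 12.4, 20.2, 32.3, 50.3),
        "AC": (60.1, 15.7, 24.9, 38.4, 59.8),
        "RP+AC": (58.5, 15.6, 24.6, 37.7, 58.5),
        "NT": (57.7, 17.3, 26.6, 39.4, 57.7),
        "NT+AC": (58.7, 15.3, 24.3, 37.4, 58.6),
        "PR": (46.2, 11.5, 18.5, 28.9, 45.7),
        "MD": (58.6, 15.8, 24.9, 38.0, 58.6),
    },
    "Part-of": {
        "RP": (56.9, 10.6, 17.9, 30.4, 47.0),
        "AC": (58.7, 14.9, 23.8, 37.0, 58.7),
        "RP+AC": (64.1, 16.6, 26.3, 40.7, 64.1),
        "NT": (50.7, 15.8, 24.1, 35.2, 50.7),
        "NT+AC": (59.2, 15.2, 24.2, 37.5, 59.2),
        "PR": (50.6, 12.6, 20.2, 31.6, 49.9),
        "MD": (59.1, 15.3, 24.4, 37.6, 59.1),
    },
    "Purpose-of": {
        "RP": (51.5, 5.1, 9.3, 18.3, 32.6),
        "AC": (63.2, 13.0, 21.5, 35.6, 63.2),
        "RP+AC": (63.4, 13.6, 22.3, 36.5, 63.4),
        "NT": (48.1, 15.4, 23.3, 33.7, 48.1),
        "NT+AC": (62.2, 13.9, 22.7, 36.6, 62.2),
        "PR": (56.3, 10.8, 18.2, 30.6, 56.3),
        "MD": (60.9, 12.7, 20.9, 34.5, 60.9),
    },
}


def extremes(rows):
    """Map each measure to the (best, worst) algorithm for one relation."""
    result = {}
    for i, measure in enumerate(MEASURES):
        best = max(rows, key=lambda alg: rows[alg][i])
        worst = min(rows, key=lambda alg: rows[alg][i])
        result[measure] = (best, worst)
    return result


for relation, rows in TABLE.items():
    print(relation)
    for measure, (best, worst) in extremes(rows).items():
        print(f"  {measure}: best={best} worst={worst}")
```

Running it shows that PR scores lowest in every measure for hypernym-of and RP+AC highest in every measure for part-of. For part-of, PR has the lowest precision, while RP has the lowest recall, F1, F0.5 and RF1. For purpose-of, NT+AC has the highest F0.5 and RP the lowest recall.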

7.2.3 Performance against an existing gold standard<br />

In the second performance evaluation, we used the proposed algorithms to ontologise antonymy relations. For this purpose, the antonymy sb-triples of TeP were converted to tb-triples. This resulted in 46,339 unique antonymy pairs: 7,633 between nouns,