Onto.PT: Towards the Automatic Construction of a Lexical Ontology ...


154 Chapter 8. Onto.PT: a lexical ontology for Portuguese

1. POS-tag the original sentence with the correct answer in the blank, so that the sentence is coherent. After tagging, remove the answer from the sentence.
2. Run the Personalized PageRank WSD algorithm (see section 8.4.2) using all content words of the sentence as context. This means that the initial weights are uniformly distributed over all synsets that contain at least one context word. The remaining nodes have an initial weight of 0.
3. For each alternative answer, retrieve all candidate synsets.
4. Select the alternative in the highest-ranked synset.
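Steps 2 to 4 above can be sketched in a few lines of Python. This is only an illustration under stated assumptions, not the implementation used in this work: the toy synsets and edges, the function names, the damping factor of 0.85 and the fixed number of iterations are all hypothetical, and POS-tagging (step 1) is assumed to have already produced the list of context words.

```python
from collections import defaultdict


def personalized_pagerank(edges, personalization, damping=0.85, iterations=30):
    """Power iteration over an undirected graph given as (u, v) pairs.

    `personalization` maps seed nodes to their initial weight; every
    other node starts (and teleports) with weight 0.
    """
    neighbours = defaultdict(set)
    for u, v in edges:
        neighbours[u].add(v)
        neighbours[v].add(u)
    nodes = set(neighbours) | set(personalization)
    total = sum(personalization.values())
    teleport = {n: personalization.get(n, 0.0) / total for n in nodes}
    rank = dict(teleport)
    for _ in range(iterations):
        new_rank = {}
        for n in nodes:
            incoming = sum(rank[m] / len(neighbours[m]) for m in neighbours[n])
            new_rank[n] = (1 - damping) * teleport[n] + damping * incoming
        rank = new_rank
    return rank


def answer_cloze(synsets, edges, context_words, alternatives):
    """Return the alternative found in the highest-ranked synset."""
    context = set(context_words)
    # Step 2: uniform initial weights over synsets with a context word.
    seeds = [sid for sid, words in synsets.items() if words & context]
    if not seeds:
        return None  # no context word is covered by the resource
    personalization = {sid: 1.0 / len(seeds) for sid in seeds}
    rank = personalized_pagerank(edges, personalization)
    best_alternative, best_score = None, -1.0
    for alternative in alternatives:
        # Step 3: candidate synsets are those containing the alternative.
        candidates = [sid for sid, words in synsets.items() if alternative in words]
        score = max((rank.get(sid, 0.0) for sid in candidates), default=0.0)
        # Step 4: keep the alternative whose best synset ranks highest.
        if score > best_score:
            best_alternative, best_score = alternative, score
    return best_alternative


# Hypothetical toy wordnet: synset id -> lexical items, plus relation edges.
toy_synsets = {
    "s1": {"carro", "automóvel"},
    "s2": {"veículo"},
    "s3": {"conduzir", "guiar"},
    "s4": {"cozinhar"},
    "s5": {"comida"},
}
toy_edges = [("s1", "s2"), ("s1", "s3"), ("s4", "s5")]

answer = answer_cloze(toy_synsets, toy_edges, ["carro", "veículo"], ["cozinhar", "conduzir"])
print(answer)  # conduzir
```

In this toy graph only the synsets connected to the context synsets receive any weight, so the alternative reachable from the context is selected. Ties and alternatives absent from the resource are handled in the simplest possible way here, which may differ from the actual procedure.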

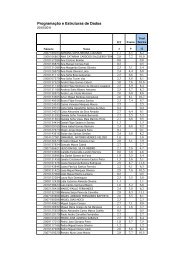

The first algorithm was run to answer the 3,900 questions with the help of CARTÃO and also, independently, with each of the lexical networks that this resource merges: those extracted from DLP (PAPEL), Dicionário Aberto (DA), and Wiktionary.PT. The second algorithm was used to answer the questions with the help of Onto.PT. Table 8.8 shows the accuracy values obtained.

| Resource | Nodes | Accuracy |
|---|---|---|
| CARTÃO | lexical items | 41.8% |
| PAPEL 3.0 | lexical items | 39.8% |
| DA | lexical items | 36.6% |
| Wiktionary.PT | lexical items | 35.8% |
| Onto.PT | synsets | 37.6% |

Table 8.8: Accuracy on answering cloze questions.
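Each question has four alternatives, so a uniformly random guess would be correct 25% of the time. As a small worked example, the snippet below expresses the accuracies of Table 8.8 as a gain in percentage points over that baseline. The variable names are illustrative only; the figures are copied from the table.

```python
# Accuracy values (in %) copied from Table 8.8.
accuracy = {
    "CARTÃO": 41.8,
    "PAPEL 3.0": 39.8,
    "DA": 36.6,
    "Wiktionary.PT": 35.8,
    "Onto.PT": 37.6,
}

ALTERNATIVES = 4                 # alternatives per cloze question
baseline = 100.0 / ALTERNATIVES  # expected accuracy of random selection

# Gain over random selection, in percentage points.
gain = {name: round(value - baseline, 1) for name, value in accuracy.items()}

for name, points in sorted(gain.items(), key=lambda item: -item[1]):
    print(f"{name}: +{points} points over random selection")
```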

These results are clearly above random selection, which would yield 25%, because there are four alternatives for each question. We should add that our approach is not very sophisticated: it takes advantage only of the question's context and of the LKBs. Moreover, some questions have a short context (355 have fewer than 8 context words) and many contain named entities (1,752 contain at least one capitalised word), which are not expected to be found in an LKB. Figure 8.7 is an example of a question with a named entity (with three tokens) and a context of five words, which was not answered correctly using any of the resources. On the other hand, figure 8.8 shows a question that was answered correctly using each of the resources.
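The two properties mentioned above, a short context and the presence of capitalised words, can be checked with a simple heuristic. The sketch below is only an approximation under several assumptions: the gap marker, the function name and the tokenisation by whitespace are hypothetical, every non-gap token is counted as a context word, and the sentence-initial word is skipped when looking for capitalised words. The counts reported in the text may have been obtained differently. The example sentence is the one from Figure 8.7, with the position of the gap assumed.

```python
BLANK = "______"  # hypothetical marker for the gap in a cloze sentence


def profile_question(sentence, min_context=8):
    """Flag a cloze sentence with a short context or capitalised words."""
    # Naive tokenisation: split on whitespace and trim punctuation.
    tokens = [token.strip(".,;:!?()\"'") for token in sentence.split()]
    words = [token for token in tokens if token and token != BLANK]
    # Skip the first word, which is capitalised in any sentence (assumption).
    capitalised = [word for word in words[1:] if word[0].isupper()]
    return {
        "context_size": len(words),
        "short_context": len(words) < min_context,
        "capitalised": capitalised,
    }


profile = profile_question("Mercedes Classe C ______ carácter desportivo.")
print(profile)
```

With this heuristic the example is flagged both as having a short context (five words) and as containing capitalised words, which matches the description of the question given in the text.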

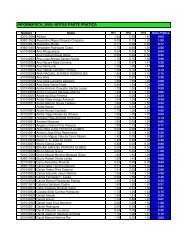

Mercedes Classe C ______ carácter desportivo.

(a) reforça (c) desloca<br />

(b) defende (d) implica<br />

Figure 8.7: Cloze question not answered correctly using any of the resources.

Despite the low accuracy obtained, this exercise showed that the organisation of the LKBs used makes sense, and that these resources can be exploited