
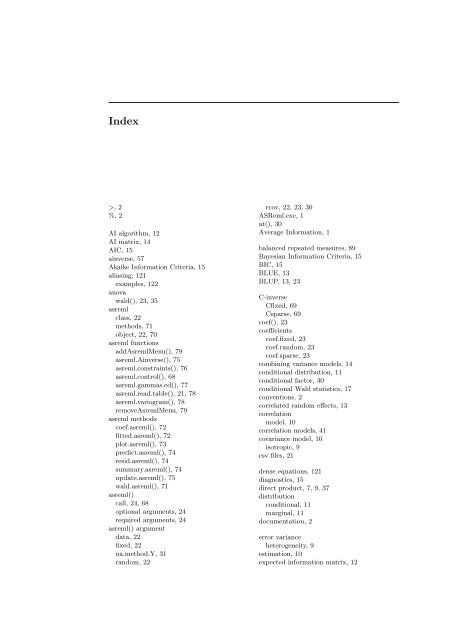

Index

- >, 2
- %, 2
- AI algorithm, 12
- AI matrix, 14
- AIC, 15
- ainverse, 57
- Akaike Information Criteria, 15
- aliasing, 121
  - examples, 122
- anova
  - wald(), 23, 35
- asreml
  - class, 22
  - methods, 71
  - object, 22, 70
- asreml functions
  - addAsremlMenu(), 79
  - asreml.Ainverse(), 75
  - asreml.constraints(), 76
  - asreml.control(), 68
  - asreml.gammas.ed(), 77
  - asreml.read.table(), 21, 78
  - asreml.variogram(), 78
  - removeAsremlMenu, 79
- asreml methods
  - coef.asreml(), 72
  - fitted.asreml(), 72
  - plot.asreml(), 73
  - predict.asreml(), 74
  - resid.asreml(), 74
  - summary.asreml(), 74
  - update.asreml(), 75
  - wald.asreml(), 71
- asreml()
  - call, 24, 68
  - optional arguments, 24
  - required arguments, 24
- asreml() argument
  - data, 22
  - fixed, 22
  - na.method.Y, 31
  - random, 22
  - rcov, 22, 23, 30
- ASReml.exe, 1
- at(), 30
- Average Information, 1
- balanced repeated measures, 89
- Bayesian Information Criteria, 15
- BIC, 15
- BLUE, 13
- BLUP, 13, 23
- C-inverse
  - Cfixed, 69
  - Csparse, 69
- coef(), 23
- coefficients
  - coef.fixed, 23
  - coef.random, 23
  - coef.sparse, 23
- combining variance models, 14
- conditional distribution, 11
- conditional factor, 30
- conditional Wald statistics, 17
- conventions, 2
- correlated random effects, 13
- correlation
  - model, 10
- correlation models, 41
- covariance model, 10
  - isotropic, 9
- csv files, 21
- dense equations, 121
- diagnostics, 15
- direct product, 7, 9, 37
- distribution
  - conditional, 11
  - marginal, 11
- documentation, 2
- error variance
  - heterogeneity, 9
- estimation, 10
- expected information matrix, 12