Dictionary of Evidence-based Medicine.pdf

Dictionary of Evidence-based Medicine.pdf

Dictionary of Evidence-based Medicine.pdf

You also want an ePaper? Increase the reach of your titles

YUMPU automatically turns print PDFs into web optimized ePapers that Google loves.

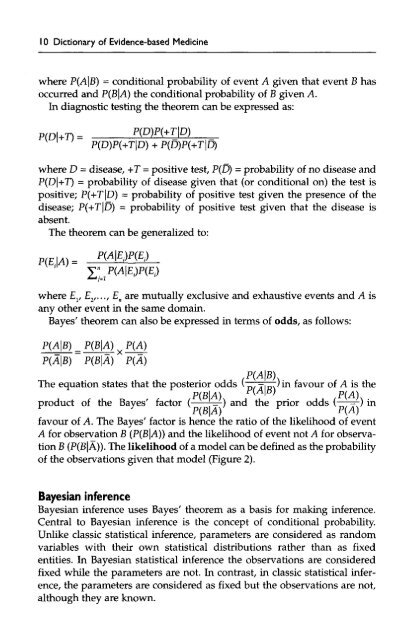


where $P(A \mid B)$ = conditional probability of event $A$ given that event $B$ has occurred and $P(B \mid A)$ = the conditional probability of $B$ given $A$.

In diagnostic testing the theorem can be expressed as:

$$P(D \mid +T) = \frac{P(+T \mid D)\,P(D)}{P(+T \mid D)\,P(D) + P(+T \mid \bar{D})\,P(\bar{D})}$$

where $D$ = disease, $\bar{D}$ = no disease, $+T$ = positive test, $P(\bar{D})$ = probability of no disease and $P(D \mid +T)$ = probability of disease given that (or conditional on) the test is positive; $P(+T \mid D)$ = probability of a positive test given the presence of the disease; $P(+T \mid \bar{D})$ = probability of a positive test given that the disease is absent.
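As a minimal sketch of the diagnostic form, the Python function below computes the probability of disease given a positive test from the prior probability of disease, the probability of a positive test when the disease is present, and the probability of a positive test when the disease is absent. The function name, parameter names and the numbers in the usage line are illustrative assumptions, not values taken from the dictionary.

```python
def post_test_probability(prior, p_pos_given_disease, p_pos_given_no_disease):
    """Return P(D|+T) by Bayes' theorem.

    prior                  -- P(D), probability of disease before testing
    p_pos_given_disease    -- P(+T|D), probability of a positive test if diseased
    p_pos_given_no_disease -- P(+T|not D), probability of a positive test if not diseased
    """
    for p in (prior, p_pos_given_disease, p_pos_given_no_disease):
        if not 0.0 <= p <= 1.0:
            raise ValueError("probabilities must lie in [0, 1]")

    true_positive = p_pos_given_disease * prior             # P(+T|D) P(D)
    false_positive = p_pos_given_no_disease * (1.0 - prior)  # P(+T|not D) P(not D)
    total_positive = true_positive + false_positive          # P(+T)
    if total_positive == 0.0:
        raise ValueError("a positive test has probability zero under these inputs")
    return true_positive / total_positive


# Hypothetical inputs: P(D) = 0.10, P(+T|D) = 0.90, P(+T|not D) = 0.20.
print(round(post_test_probability(0.10, 0.90, 0.20), 3))  # prints 0.333
```

With these assumed inputs, a positive test raises the probability of disease from 0.10 to about 0.33, because false positives among the non-diseased majority still outnumber true positives.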

The theorem can be generalized to:

$$P(E_i \mid A) = \frac{P(A \mid E_i)\,P(E_i)}{\sum_{j=1}^{n} P(A \mid E_j)\,P(E_j)}$$

where $E_1, E_2, \ldots, E_n$ are mutually exclusive and exhaustive events and $A$ is any other event in the same domain.
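The generalized form can be sketched in Python as follows: given the prior probability of each of n mutually exclusive and exhaustive events and the probability of A under each of them, the function returns the probability of each event given A. The function name and the three-event example are hypothetical and serve only as an illustration.

```python
def posterior_over_events(priors, likelihoods):
    """Return [P(E_i|A)] given priors [P(E_i)] and likelihoods [P(A|E_i)]."""
    if len(priors) != len(likelihoods):
        raise ValueError("priors and likelihoods must have the same length")
    # Mutually exclusive and exhaustive events have priors that sum to 1.
    if abs(sum(priors) - 1.0) > 1e-9:
        raise ValueError("priors must sum to 1")

    joint = [p * l for p, l in zip(priors, likelihoods)]  # P(A|E_i) P(E_i)
    p_a = sum(joint)                                       # P(A), the denominator
    if p_a == 0.0:
        raise ValueError("event A has probability zero under these inputs")
    return [j / p_a for j in joint]


# Hypothetical example with three candidate events.
priors = [0.5, 0.3, 0.2]
likelihoods = [0.1, 0.4, 0.8]
posteriors = posterior_over_events(priors, likelihoods)
print([round(p, 3) for p in posteriors])
```

The denominator is the total probability of A, so the returned probabilities always sum to 1.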

Bayes' theorem can also be expressed in terms of odds, as follows:

$$\frac{P(A \mid B)}{P(\bar{A} \mid B)} = \frac{P(B \mid A)}{P(B \mid \bar{A})} \times \frac{P(A)}{P(\bar{A})}$$

The equation states that the posterior odds $\left(\frac{P(A \mid B)}{P(\bar{A} \mid B)}\right)$ in favour of $A$ is the product of the Bayes' factor $\left(\frac{P(B \mid A)}{P(B \mid \bar{A})}\right)$ and the prior odds $\left(\frac{P(A)}{P(\bar{A})}\right)$ in favour of $A$. The Bayes' factor is hence the ratio of the likelihood of event $A$ for observation $B$ ($P(B \mid A)$) and the likelihood of event not $A$ for observation $B$ ($P(B \mid \bar{A})$). The likelihood of a model can be defined as the probability of the observations given that model (Figure 2).
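The odds form lends itself to a short Python sketch: the posterior odds in favour of A are obtained by multiplying the prior odds by the Bayes' factor, and odds can be converted back to a probability when needed. The function names and the input values are assumptions made for illustration.

```python
def posterior_odds(prior_prob, p_b_given_a, p_b_given_not_a):
    """Return the posterior odds in favour of A after observing B."""
    if not 0.0 <= prior_prob < 1.0:
        raise ValueError("prior probability must lie in [0, 1)")
    if p_b_given_not_a == 0.0:
        raise ValueError("P(B|not A) must be non-zero for a finite Bayes' factor")
    prior_odds = prior_prob / (1.0 - prior_prob)   # P(A) / P(not A)
    bayes_factor = p_b_given_a / p_b_given_not_a   # P(B|A) / P(B|not A)
    return bayes_factor * prior_odds


def odds_to_probability(odds):
    """Convert odds in favour of an event into its probability."""
    return odds / (1.0 + odds)


# Hypothetical inputs: P(A) = 0.10, P(B|A) = 0.90, P(B|not A) = 0.20.
odds = posterior_odds(0.10, 0.90, 0.20)
print(round(odds, 3), round(odds_to_probability(odds), 3))  # prints 0.5 0.333
```

Here the prior odds of 1 to 9 are multiplied by a Bayes' factor of 4.5 to give posterior odds of 1 to 2, which corresponds to a probability of about 0.33.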

Bayesian inference

Bayesian inference uses Bayes' theorem as a basis for making inference. Central to Bayesian inference is the concept of conditional probability. Unlike classic statistical inference, parameters are considered as random variables with their own statistical distributions rather than as fixed entities. In Bayesian statistical inference the observations are considered fixed while the parameters are not. In contrast, in classic statistical inference, the parameters are considered as fixed but the observations are not, although they are known.
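To illustrate the idea of a parameter treated as a random variable while the observations stay fixed, the sketch below approximates the posterior distribution of an unknown proportion on a grid of candidate values. The binomial model, the uniform prior, the grid size and the data (7 successes in 10 trials) are all assumptions chosen for illustration; they do not come from the dictionary.

```python
def grid_posterior(successes, trials, grid_size=101):
    """Approximate the posterior of a proportion theta on an evenly spaced grid.

    Assumes a binomial likelihood and a uniform prior over the grid points.
    """
    thetas = [i / (grid_size - 1) for i in range(grid_size)]
    prior = [1.0 / grid_size] * grid_size
    # Likelihood of the fixed observations under each candidate theta
    # (the binomial coefficient is constant in theta and cancels on normalizing).
    likelihood = [t ** successes * (1.0 - t) ** (trials - successes) for t in thetas]
    unnormalized = [p * l for p, l in zip(prior, likelihood)]
    total = sum(unnormalized)
    posterior = [u / total for u in unnormalized]
    return thetas, posterior


# Hypothetical data: 7 successes observed in 10 trials.
thetas, posterior = grid_posterior(successes=7, trials=10)
posterior_mean = sum(t * p for t, p in zip(thetas, posterior))
most_probable = thetas[posterior.index(max(posterior))]
print(round(posterior_mean, 2), most_probable)
```

The output of the function is a whole distribution over the parameter rather than a single fixed estimate, which is the distinction from classic inference drawn in the entry.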