Mathematics in Independent Component Analysis

Mathematics in Independent Component Analysis

Mathematics in Independent Component Analysis

Create successful ePaper yourself

Turn your PDF publications into a flip-book with our unique Google optimized e-Paper software.

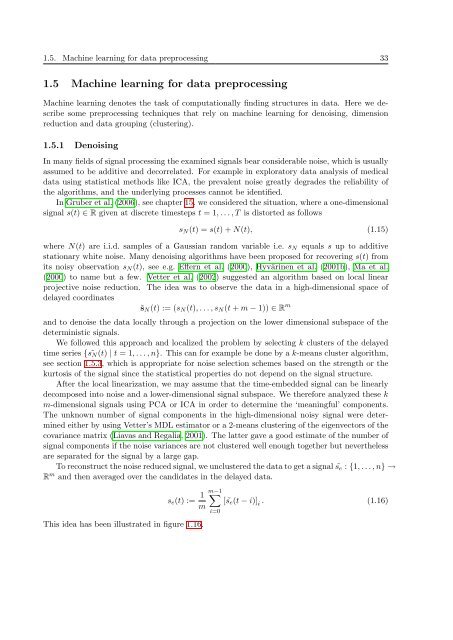


1.5 Machine learning for data preprocessing

Machine learning denotes the task of computationally finding structures in data. Here we describe some preprocessing techniques that rely on machine learning for denoising, dimension reduction and data grouping (clustering).

1.5.1 Denoising

In many fields of signal processing the examined signals bear considerable noise, which is usually assumed to be additive and decorrelated. For example in exploratory data analysis of medical data using statistical methods like ICA, the prevalent noise greatly degrades the reliability of the algorithms, and the underlying processes cannot be identified.

In Gruber et al. (2006), see chapter 15, we considered the situation where a one-dimensional signal $s(t) \in \mathbb{R}$, given at discrete time steps $t = 1, \ldots, T$, is distorted as follows:

$$s_N(t) = s(t) + N(t), \tag{1.15}$$

where $N(t)$ are i.i.d. samples of a Gaussian random variable, i.e. $s_N$ equals $s$ up to additive stationary white noise. Many denoising algorithms have been proposed for recovering $s(t)$ from its noisy observation $s_N(t)$, see e.g. Effern et al. (2000), Hyvärinen et al. (2001b), Ma et al. (2000), to name but a few. Vetter et al. (2002) suggested an algorithm based on local linear projective noise reduction. The idea was to observe the data in a high-dimensional space of delayed coordinates

$$\tilde{s}_N(t) := (s_N(t), \ldots, s_N(t + m - 1)) \in \mathbb{R}^m$$

and to denoise the data locally through a projection onto the lower-dimensional subspace of the deterministic signals.
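The noise model (1.15) and the delay-coordinate embedding can be sketched in a few lines of NumPy. This is only an illustration under stated assumptions: the function name `delay_embed`, the sine test signal, the noise level 0.3 and the embedding dimension `m = 10` are hypothetical choices, not taken from Gruber et al. (2006), and indices are 0-based here whereas the text counts from 1.

```python
import numpy as np

def delay_embed(signal, m):
    """Stack the delay vectors (signal[t], ..., signal[t + m - 1]) as rows."""
    signal = np.asarray(signal, dtype=float)
    n = signal.shape[0] - m + 1  # number of complete delay vectors
    if m < 1 or n < 1:
        raise ValueError("need 1 <= m <= len(signal)")
    # Row t, column i selects signal[t + i].
    index = np.arange(n)[:, None] + np.arange(m)[None, :]
    return signal[index]

# Hypothetical test signal: a sine wave plus i.i.d. Gaussian noise, as in (1.15).
rng = np.random.default_rng(0)
T, m = 200, 10
s = np.sin(2 * np.pi * np.arange(T) / 50)
s_noisy = s + 0.3 * rng.standard_normal(T)

X = delay_embed(s_noisy, m)  # one m-dimensional delay vector per row
```

Only complete delay vectors are kept in this sketch, so a signal of length `T` yields `T - m + 1` rows.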

We followed this approach and localized the problem by selecting $k$ clusters of the delayed time series $\{\tilde{s}_N(t) \mid t = 1, \ldots, n\}$. This can for example be done by a k-means cluster algorithm, see section 1.5.3, which is appropriate for noise selection schemes based on the strength or the kurtosis of the signal since the statistical properties do not depend on the signal structure.
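As a rough illustration of the localization step, the following sketch groups the rows of a matrix (for instance, delay vectors) with a plain Lloyd-style k-means. It is a minimal sketch, not the clustering code used in the cited work: the helper names, the random initialization from data points and the four-point toy data set are assumptions made for this example.

```python
import numpy as np

def nearest_center(X, centers):
    """Index of the closest center (squared Euclidean distance) for each row of X."""
    d2 = ((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
    return d2.argmin(axis=1)

def kmeans(X, k, n_iter=100, seed=0):
    """Lloyd iterations: alternate assignment and center update until stable."""
    X = np.asarray(X, dtype=float)
    rng = np.random.default_rng(seed)
    # Assumption: initialize with k distinct rows of X chosen at random.
    centers = X[rng.choice(len(X), size=k, replace=False)]
    for _ in range(n_iter):
        labels = nearest_center(X, centers)
        new_centers = centers.copy()
        for j in range(k):
            members = X[labels == j]
            if len(members) > 0:  # keep the old center if a cluster is empty
                new_centers[j] = members.mean(axis=0)
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return nearest_center(X, centers), centers

# Toy data standing in for delay vectors: two well-separated groups.
X = np.array([[0.0, 0.0], [0.0, 1.0], [10.0, 10.0], [10.0, 11.0]])
labels, centers = kmeans(X, k=2)
groups = [np.flatnonzero(labels == j) for j in range(2)]  # row indices per cluster
```

Keeping the row indices of each cluster makes it possible to write the locally processed vectors back to their original time positions later.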

After the local linearization, we may assume that the time-embedded signal can be linearly decomposed into noise and a lower-dimensional signal subspace. We therefore analyzed these $k$ $m$-dimensional signals using PCA or ICA in order to determine the ‘meaningful’ components. The unknown number of signal components in the high-dimensional noisy signal was determined either by using Vetter’s MDL estimator or by a 2-means clustering of the eigenvalues of the covariance matrix (Liavas and Regalia, 2001). The latter gave a good estimate of the number of signal components if the noise variances are not clustered well enough together but are nevertheless separated from the signal variances by a large gap.
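The PCA variant of this step, with the number of components chosen by 2-means, might look as follows for a single cluster. This sketch clusters the eigenvalues of the covariance matrix (the variances along the principal directions) into a "noise" and a "signal" group and projects onto the directions of the latter. The function names, the handling of the degenerate case without any gap, and the synthetic test data are assumptions for illustration; the MDL estimator and the ICA alternative are not shown.

```python
import numpy as np

def two_means_upper(values, n_iter=100):
    """1-D 2-means; returns a boolean mask marking the cluster with the larger mean."""
    values = np.asarray(values, dtype=float)
    lo, hi = values.min(), values.max()
    if lo == hi:
        # No gap at all: treat every value as signal (an arbitrary choice).
        return np.ones(values.shape, dtype=bool)
    for _ in range(n_iter):
        upper = np.abs(values - hi) < np.abs(values - lo)
        new_lo, new_hi = values[~upper].mean(), values[upper].mean()
        if new_lo == lo and new_hi == hi:
            break
        lo, hi = new_lo, new_hi
    return upper

def pca_project(X):
    """Project the rows of X onto the principal directions kept by 2-means."""
    X = np.asarray(X, dtype=float)
    mean = X.mean(axis=0)
    centered = X - mean
    cov = centered.T @ centered / len(X)
    eigvals, eigvecs = np.linalg.eigh(cov)  # symmetric input, ascending eigenvalues
    keep = two_means_upper(eigvals)         # True for the large-variance group
    basis = eigvecs[:, keep]                # orthonormal columns spanning the signal subspace
    return mean + centered @ basis @ basis.T, int(keep.sum())

# Hypothetical cluster: points on a line in R^4 plus weak white noise.
rng = np.random.default_rng(0)
direction = np.full(4, 0.5)  # unit vector
clean = np.outer(5.0 * rng.standard_normal(500), direction)
noisy = clean + 0.1 * rng.standard_normal((500, 4))
denoised, n_components = pca_project(noisy)
```

In this toy setting the signal variance is far larger than the noise variance, which is the large-gap situation in which the 2-means criterion is expected to work.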

To reconstruct the noise-reduced signal, we unclustered the data to get a signal $\tilde{s}_e : \{1, \ldots, n\} \to \mathbb{R}^m$ and then averaged over the candidates in the delayed data:

$$s_e(t) := \frac{1}{m} \sum_{i=0}^{m-1} [\tilde{s}_e(t - i)]_i . \tag{1.16}$$

This idea has been illustrated in figure 1.16.
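The averaging in (1.16) can be sketched as below, with the denoised delay vectors stored as rows of a matrix and components indexed from 0, so that component $i$ of the vector at time $t - i$ is a candidate for the sample at time $t$. One point is an assumption of this sketch rather than something stated in the text: near the borders fewer than $m$ candidates exist, and the code averages over those that are available instead of always dividing by $m$. The function name `average_delays` is hypothetical.

```python
import numpy as np

def average_delays(E):
    """Average all delay-vector components that refer to the same time index.

    E has shape (n, m); E[r, i] is a candidate for the sample at time r + i.
    Returns a signal of length n + m - 1.
    """
    E = np.asarray(E, dtype=float)
    n, m = E.shape
    total = np.zeros(n + m - 1)
    count = np.zeros(n + m - 1)
    for i in range(m):
        total[i:i + n] += E[:, i]  # column i covers times i, ..., i + n - 1
        count[i:i + n] += 1
    return total / count           # every time index has at least one candidate

# Embedding 0, 1, 2, 3, 4 with m = 3 and averaging recovers the sequence exactly.
E = np.array([[0.0, 1.0, 2.0], [1.0, 2.0, 3.0], [2.0, 3.0, 4.0]])
recovered = average_delays(E)
```

For interior time indices the count equals `m`, so the result there coincides with (1.16).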