Superconducting Technology Assessment - nitrd

Superconducting Technology Assessment - nitrd

Superconducting Technology Assessment - nitrd

You also want an ePaper? Increase the reach of your titles

YUMPU automatically turns print PDFs into web optimized ePapers that Google loves.

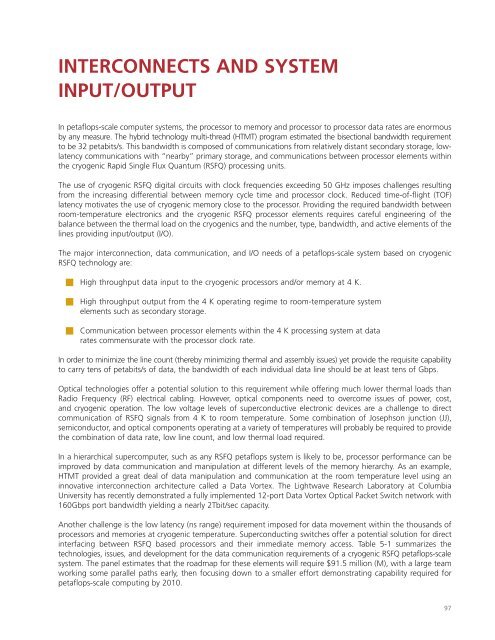

INTERCONNECTS AND SYSTEM INPUT/OUTPUT

In petaflops-scale computer systems, the processor-to-memory and processor-to-processor data rates are enormous by any measure. The hybrid technology multi-thread (HTMT) program estimated the bisectional bandwidth requirement to be 32 petabits/s. This bandwidth is composed of communications from relatively distant secondary storage, low-latency communications with “nearby” primary storage, and communications between processor elements within the cryogenic Rapid Single Flux Quantum (RSFQ) processing units.

The use of cryogenic RSFQ digital circuits with clock frequencies exceeding 50 GHz imposes challenges resulting from the increasing differential between memory cycle time and processor clock period. The need to reduce time-of-flight (TOF) latency motivates the use of cryogenic memory close to the processor. Providing the required bandwidth between room-temperature electronics and the cryogenic RSFQ processor elements requires careful engineering of the balance between the thermal load on the cryogenics and the number, type, bandwidth, and active elements of the lines providing input/output (I/O).
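To make the time-of-flight concern concrete, the following minimal sketch estimates how many processor clock cycles elapse while a signal travels a given distance. The 50 GHz clock comes from the text; the function name, the velocity factor of 0.66 (a typical figure for signals in cable or on a board), and the sample distances are illustrative assumptions, not values from the assessment.

```python
# Sketch: clock cycles consumed by one-way signal propagation.
# Assumptions (not from the source): velocity_factor and the sample distances.

SPEED_OF_LIGHT_M_PER_S = 299_792_458.0


def clock_cycles_in_flight(distance_m, clock_hz=50e9, velocity_factor=0.66):
    """Return the number of clock cycles that pass during one-way propagation.

    distance_m      -- physical path length in meters
    clock_hz        -- processor clock frequency (50 GHz per the text)
    velocity_factor -- assumed signal speed as a fraction of c
    """
    time_of_flight_s = distance_m / (velocity_factor * SPEED_OF_LIGHT_M_PER_S)
    return time_of_flight_s * clock_hz


if __name__ == "__main__":
    # Hypothetical distances: on-module, across a cryostat, out to a rack.
    for distance_m in (0.01, 0.1, 1.0):
        cycles = clock_cycles_in_flight(distance_m)
        print(f"{distance_m:5.2f} m -> {cycles:7.1f} clock cycles")
```

Under these assumptions, a one-meter path costs on the order of 250 clock cycles each way, which illustrates why memory placed physically close to the processor is attractive.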

The major interconnection, data communication, and I/O needs of a petaflops-scale system based on cryogenic RSFQ technology are:

■ High throughput data input to the cryogenic processors and/or memory at 4 K.

■ High throughput output from the 4 K operating regime to room-temperature system elements such as secondary storage.

■ Communication between processor elements within the 4 K processing system at data rates commensurate with the processor clock rate.

To minimize the line count (thereby minimizing thermal and assembly issues) yet provide the requisite capability to carry tens of petabits/s of data, the bandwidth of each individual data line should be at least tens of Gbps. Optical technologies offer a potential solution to this requirement while offering much lower thermal loads than Radio Frequency (RF) electrical cabling. However, optical components need to overcome issues of power, cost, and cryogenic operation. The low voltage levels of superconductive electronic devices are a challenge to direct communication of RSFQ signals from 4 K to room temperature. Some combination of Josephson junction (JJ), semiconductor, and optical components operating at a variety of temperatures will probably be required to provide the combination of data rate, low line count, and low thermal load required.
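The trade-off between per-line bandwidth and line count can be illustrated with simple arithmetic. The sketch below takes the 32 petabits/s HTMT bisectional bandwidth estimate quoted earlier and divides it by several candidate per-line rates. Treating the full figure as if it crossed a single set of lines is a simplifying assumption, since the text describes that bandwidth as a mix of several kinds of communication; the function name and the candidate rates are likewise hypothetical.

```python
# Sketch: how many data lines are needed to carry an aggregate bandwidth.
# Assumption (not from the source): the whole 32 Pb/s is carried by one
# homogeneous set of lines, and the candidate per-line rates listed below.

HTMT_BISECTION_BPS = 32 * 10**15  # 32 petabits/s, the HTMT estimate


def lines_required(aggregate_bps, per_line_bps):
    """Smallest whole number of lines whose combined rate meets the aggregate."""
    return -(-aggregate_bps // per_line_bps)  # integer ceiling division


if __name__ == "__main__":
    for rate_gbps in (10, 40, 100):  # hypothetical per-line rates
        count = lines_required(HTMT_BISECTION_BPS, rate_gbps * 10**9)
        print(f"{rate_gbps:4d} Gbps per line -> {count:,} lines")
```

Even at tens of Gbps per line, the count under this simplification runs to hundreds of thousands or millions of lines, which is why each line's thermal load and assembly cost matter so much.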

In a hierarchical supercomputer, such as any RSFQ petaflops system is likely to be, processor performance can be improved by data communication and manipulation at different levels of the memory hierarchy. As an example, HTMT provided a great deal of data manipulation and communication at the room-temperature level using an innovative interconnection architecture called a Data Vortex. The Lightwave Research Laboratory at Columbia University has recently demonstrated a fully implemented 12-port Data Vortex Optical Packet Switch network with 160 Gbps port bandwidth, yielding a capacity of nearly 2 Tbit/s.
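The "nearly 2 Tbit/s" figure is consistent with multiplying the port count by the per-port bandwidth. The short check below assumes that reading of "capacity", which the text does not state explicitly; the function name is illustrative.

```python
# Sketch: aggregate switch capacity as ports x per-port bandwidth.
# Assumption (not from the source): capacity is the simple product of the two.


def aggregate_capacity_tbps(ports, port_gbps):
    """Total capacity in Tbit/s for a switch with identical ports."""
    return ports * port_gbps / 1000


if __name__ == "__main__":
    print(aggregate_capacity_tbps(ports=12, port_gbps=160), "Tbit/s")
```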

Another challenge is the low-latency (ns range) requirement imposed on data movement among the thousands of processors and memories at cryogenic temperature. Superconducting switches offer a potential solution for direct interfacing between RSFQ-based processors and their immediate memory access. Table 5-1 summarizes the technologies, issues, and development for the data communication requirements of a cryogenic RSFQ petaflops-scale system. The panel estimates that the roadmap for these elements will require $91.5 million (M), with a large team working some parallel paths early, then focusing down to a smaller effort demonstrating the capability required for petaflops-scale computing by 2010.
