Numerical Methods Contents - SAM


1.3 Complexity/computational effort

complexity/computational effort of an algorithm :⇔ number of elementary operations (additions/multiplications)

Crucial: dependence of the (worst-case) complexity of an algorithm on (integer) problem size parameters (worst case ↔ maximum over all possible data).

Usually studied: asymptotic complexity ≙ “leading order term” of the complexity w.r.t. large problem size parameters.

The usual choice of problem size parameters in numerical linear algebra is the number of independent real variables needed to describe the input data (vector length, matrix sizes).

$$
\begin{aligned}
Q_2 &= A_{11} \ast (B_{12} - B_{22}), \\
Q_3 &= A_{22} \ast (-B_{11} + B_{21}), \\
Q_4 &= (A_{11} + A_{12}) \ast B_{22}, \\
Q_5 &= (-A_{11} + A_{21}) \ast (B_{11} + B_{12}), \\
Q_6 &= (A_{12} - A_{22}) \ast (B_{21} + B_{22}).
\end{aligned}
$$

Besides a considerable number of matrix additions (computational effort $O(n^2)$), it takes only 7 multiplications of matrices of size $n/2$ to compute $C$! Strassen’s algorithm boils down to the recursive application of these formulas for $n = 2^k$, $k \in \mathbb{N}$.
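A sketch of where the exponent $\log_2 7$ comes from, assuming the recursion is carried all the way down to $1 \times 1$ blocks and every scalar addition and multiplication is counted: one recursion step performs 7 products of size $n/2$ plus 18 additions/subtractions of $(n/2)\times(n/2)$ blocks (10 in forming the factors of $Q_0,\dots,Q_6$, 8 in assembling $C_{11},\dots,C_{22}$), that is $\tfrac{18}{4}n^2$ scalar operations. With $n = 2^k$ and $T(1) = 1$ the cost $T(n)$ satisfies

$$
\begin{aligned}
T(2^k) &= 7\,T(2^{k-1}) + \tfrac{9}{2}\,4^{k}
        = 7^k + \tfrac{9}{2}\sum_{j=0}^{k-1} 7^j\,4^{k-j} \\
       &= 7^k + 6\left(7^k - 4^k\right) = 7\cdot 7^k - 6\cdot 4^k .
\end{aligned}
$$

Since $7^k = (2^k)^{\log_2 7} = n^{\log_2 7}$ and $4^k = n^2$, this gives $T(n) = 7\,n^{\log_2 7} - 6\,n^2 = O(n^{\log_2 7}) \approx O(n^{2.81})$, compared with $2n^3 - n^2$ operations for the straightforward product.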

A refined algorithm of this type can achieve complexity $O(n^{2.36})$, see [7].

△<br />
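The block formulas of Remark 1.3.1 can be checked numerically. The MATLAB script below is a sketch that performs a single level of the splitting for an arbitrarily chosen $n = 8$ and, as a simplifying assumption, evaluates the seven half-size products $Q_0,\dots,Q_6$ with the built-in `*` instead of recursing; it then compares the assembled $C$ with `A*B`.

```matlab
% One level of Strassen's block formulas, checked against the built-in product.
% Assumption: the seven l x l products use MATLAB's * (no recursion).
n = 8; l = n/2;                          % n = 2l (example size)
A = rand(n); B = rand(n);
I1 = 1:l; I2 = l+1:n;                    % index sets of the 2x2 block partition
A11 = A(I1,I1); A12 = A(I1,I2); A21 = A(I2,I1); A22 = A(I2,I2);
B11 = B(I1,I1); B12 = B(I1,I2); B21 = B(I2,I1); B22 = B(I2,I2);
% The seven products of l x l matrices
Q0 = (A11 + A22) * (B11 + B22);
Q1 = (A21 + A22) * B11;
Q2 = A11 * (B12 - B22);
Q3 = A22 * (-B11 + B21);
Q4 = (A11 + A12) * B22;
Q5 = (-A11 + A21) * (B11 + B12);
Q6 = (A12 - A22) * (B21 + B22);
% Assemble the blocks of C = AB (only additions/subtractions from here on)
C11 = Q0 + Q3 - Q4 + Q6;
C12 = Q2 + Q4;
C21 = Q1 + Q3;
C22 = Q0 + Q2 - Q1 + Q5;
C = [C11, C12; C21, C22];
err = norm(C - A*B, 'fro') / norm(A*B, 'fro');   % relative deviation
fprintf('relative deviation from A*B: %g\n', err);
```

Replacing each of the seven `*` products by a recursive call on the $l \times l$ blocks would turn this one-level check into Strassen's algorithm for $n = 2^k$.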

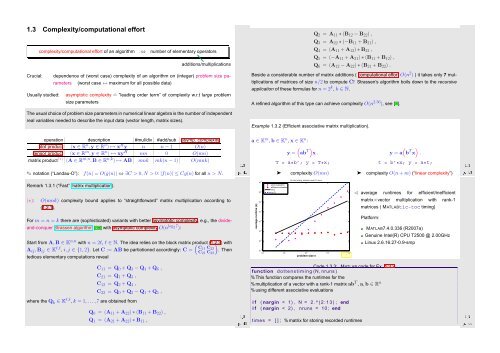

Example 1.3.2 (Efficient associative matrix multiplication).

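| operation | description | #mul/div | #add/sub | asymp. complexity |
|---|---|---|---|---|
| dot product | $(x \in \mathbb{R}^n, y \in \mathbb{R}^n) \mapsto x^H y$ | $n$ | $n - 1$ | $O(n)$ |
| tensor product | $(x \in \mathbb{R}^m, y \in \mathbb{R}^n) \mapsto x y^H$ | $nm$ | $0$ | $O(mn)$ |
| matrix product (∗) | $(A \in \mathbb{R}^{m,n}, B \in \mathbb{R}^{n,k}) \mapsto AB$ | $mnk$ | $mk(n - 1)$ | $O(mnk)$ |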
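The counts in the last row can be reproduced by instrumenting the straightforward triple loop. The following MATLAB script is a minimal sketch with arbitrarily chosen sizes $m = 3$, $n = 4$, $k = 5$ and hypothetical counter variables `nmul`, `nadd`; it accumulates each entry $c_{ij} = \sum_l a_{il} b_{lj}$ starting from the first product, so no addition is spent on an initial zero.

```matlab
% Count the elementary operations of the straightforward matrix product
% c_ij = sum_l a_il*b_lj; the sizes m, n, k are arbitrary examples.
m = 3; n = 4; k = 5;
A = rand(m, n); B = rand(n, k);
C = zeros(m, k);
nmul = 0; nadd = 0;                         % operation counters
for ii = 1:m
  for jj = 1:k
    s = A(ii,1)*B(1,jj); nmul = nmul + 1;   % first term: 1 multiplication
    for ll = 2:n
      s = s + A(ii,ll)*B(ll,jj);            % 1 multiplication + 1 addition
      nmul = nmul + 1; nadd = nadd + 1;
    end
    C(ii,jj) = s;
  end
end
fprintf('#mul = %d (mnk = %d), #add = %d (mk(n-1) = %d)\n', ...
        nmul, m*n*k, nadd, m*k*(n-1));
```

Each of the $mk$ entries costs $n$ multiplications and $n-1$ additions, which is exactly what the counters record.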

✎ notation (“Landau-O”): $f(n) = O(g(n)) \;\Leftrightarrow\; \exists\, C > 0,\ N > 0:\ |f(n)| \le C\,g(n)$ for all $n > N$.
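As a small worked instance of this definition, take the matrix product row of the table with $m = n = k$; the total operation count and a valid pair of constants are

$$
f(n) = \underbrace{n^3}_{\#\text{mul}} + \underbrace{n^2(n-1)}_{\#\text{add}} = 2n^3 - n^2 \le 2\,n^3 \quad \text{for all } n > 1 ,
$$

so $f(n) = O(n^3)$ with $C = 2$, $N = 1$, and the leading order term $2n^3$ is what the asymptotic complexity retains.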

Remark 1.3.1 (“Fast” matrix multiplication).

(∗): The $O(mnk)$ complexity bound applies to “straightforward” matrix multiplication according to (1.2.1).

For $m = n = k$ there are (sophisticated) variants with better asymptotic complexity, e.g., the divide-and-conquer Strassen algorithm [39] with asymptotic complexity $O(n^{\log_2 7})$:

Start from $A, B \in \mathbb{K}^{n,n}$ with $n = 2l$, $l \in \mathbb{N}$. The idea relies on the block matrix product (1.2.3) with $A_{ij}, B_{ij} \in \mathbb{K}^{l,l}$, $i,j \in \{1,2\}$. Let $C := AB$ be partitioned accordingly:

$$C = \begin{pmatrix} C_{11} & C_{12} \\ C_{21} & C_{22} \end{pmatrix}.$$

Then tedious elementary computations reveal

Given $a \in \mathbb{K}^m$, $b \in \mathbb{K}^n$, $x \in \mathbb{K}^n$, compute

$$y = \left(a b^T\right) x .$$

Slow evaluation:

T = a*b'; y = T*x;

➤ complexity $O(mn)$

[Fig. 1: tic-toc timing, minimum over 10 runs. Log-log plot of average runtime (s) versus problem size n for the slow and the efficient evaluation, with reference slopes $O(n)$ and $O(n^2)$.]

$$y = a\left(b^T x\right) .$$

Efficient evaluation:

t = b'*x; y = a*t;

➤ complexity $O(n + m)$ (“linear complexity”)

Fig. 1: average runtimes for efficient/inefficient matrix×vector multiplication with rank-1 matrices (MATLAB tic-toc timing).
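To see that both bracketings give the same vector while doing very different amounts of work, the following MATLAB sketch evaluates $y$ both ways for arbitrarily chosen sizes $m = 500$, $n = 400$ and random real data (for complex data `b'` is the conjugate transpose, so the code would compute $a b^H x$). It performs no timing; the multiplication counts $2mn$ and $m + n$ are printed from the formulas, not measured.

```matlab
% Compare the two associative evaluations of y = (a*b')*x for real vectors.
m = 500; n = 400;                     % example sizes (arbitrary choice)
a = rand(m,1); b = rand(n,1); x = rand(n,1);
T = a*b';  y_slow = T*x;              % forms the m x n matrix a*b': mn + mn multiplications
t = b'*x;  y_fast = a*t;              % scalar t = b'*x first: n + m multiplications
reldiff = norm(y_slow - y_fast) / norm(y_fast);   % agreement up to rounding
fprintf('relative difference: %g, #mul slow = %d, #mul fast = %d\n', ...
        reldiff, 2*m*n, m + n);
```

The efficient variant never stores the $m \times n$ matrix $a b^T$, which also saves $O(mn)$ memory.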

Platform: MATLAB 7.4.0.336 (R2007a), Genuine Intel(R) CPU T2500 @ 2.00GHz, Linux 2.6.16.27-0.9-smp.

$$
\begin{aligned}
C_{11} &= Q_0 + Q_3 - Q_4 + Q_6, \\
C_{21} &= Q_1 + Q_3, \\
C_{12} &= Q_2 + Q_4, \\
C_{22} &= Q_0 + Q_2 - Q_1 + Q_5,
\end{aligned}
$$

where the $Q_k \in \mathbb{K}^{l,l}$, $k = 0,\dots,6$, are obtained from

$$
\begin{aligned}
Q_0 &= (A_{11} + A_{22}) \ast (B_{11} + B_{22}), \\
Q_1 &= (A_{21} + A_{22}) \ast B_{11},
\end{aligned}
$$

Code 1.3.3: MATLAB code for Ex. 1.3.2

function dottenstiming(N, nruns)
% This function compares the runtimes for the
% multiplication of a vector with a rank-1 matrix a*b^T, a,b ∈ R^n
% using different associative evaluations

if (nargin < 1), N = 2.^(2:13); end
if (nargin < 2), nruns = 10; end

times = []; % matrix for storing recorded runtimes