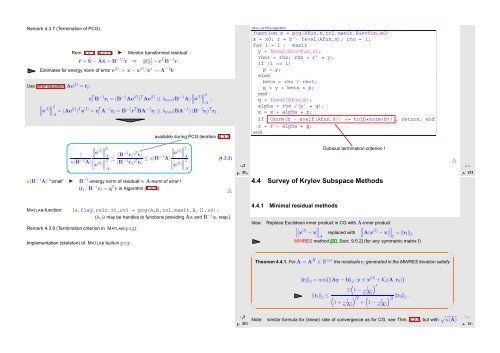

Remark 4.3.7 (Termination of PCG).

Rem. 4.2.3, (4.2.11) ➤ Monitor the transformed residual

$$\tilde{r} = \tilde{b} - \tilde{A}\tilde{x} = B^{-1/2} r \quad\Rightarrow\quad \|\tilde{r}\|_2^2 = r^T B^{-1} r .$$

Estimates for the energy norm of the error $e^{(l)} := x^* - x^{(l)}$, $x^* := A^{-1} b$. Use the error equation $A e^{(l)} = r_l$:

$$r_l^T B^{-1} r_l = (B^{-1} A e^{(l)})^T A e^{(l)} \le \lambda_{\max}(B^{-1}A)\, \|e^{(l)}\|_A^2 ,$$

$$\|e^{(l)}\|_A^2 = (A e^{(l)})^T e^{(l)} = r_l^T A^{-1} r_l = (B^{-1} r_l)^T B A^{-1} r_l \le \lambda_{\max}(BA^{-1})\, (B^{-1} r_l)^T r_l .$$

The quantity $(B^{-1} r_l)^T r_l = r_l^T B^{-1} r_l$ is available during the PCG iteration (4.3.2).

MATLAB PCG algorithm:

    function x = pcg(Afun,b,tol,maxit,Binvfun,x0)
    x = x0; r = b - feval(Afun,x); rho = 1;
    for i = 1 : maxit
      y = feval(Binvfun,r);            % preconditioned residual y = B^{-1} r
      rho1 = rho; rho = r' * y;        % rho = r^T B^{-1} r
      if (i == 1)
        p = y;
      else
        beta = rho / rho1;
        p = y + beta * p;              % new search direction
      end
      q = feval(Afun,p);
      alpha = rho /(p' * q);           % step size
      x = x + alpha * p;
      if (norm(b - feval(Afun,x)) [...]

[The listing is truncated at this point in the source.]
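The two estimates of Remark 4.3.7 can be combined: writing $\rho_l := r_l^T B^{-1} r_l$ and using $\lambda_{\max}(BA^{-1}) = 1/\lambda_{\min}(B^{-1}A)$, one gets $\|e^{(l)}\|_A \le \sqrt{\kappa(B^{-1}A)\,\rho_l/\rho_0}\;\|e^{(0)}\|_A$, so a relative drop of $\sqrt{\rho_l}$ controls the relative energy-norm error up to the factor $\sqrt{\kappa(B^{-1}A)}$. The following is a minimal Python sketch of a PCG loop that terminates on this quantity. It is not the MATLAB listing from the notes: the relative stopping rule, the Jacobi preconditioner, the test matrix, and all function names are assumptions made for illustration.

```python
import math

def matvec(A, v):
    """Dense matrix-vector product for a matrix stored as a list of rows."""
    return [sum(a * x for a, x in zip(row, v)) for row in A]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def pcg(A, b, apply_binv, x0, tol, maxit):
    """PCG that stops once sqrt(r^T B^{-1} r) has dropped by the factor tol.

    apply_binv(r) must return B^{-1} r (assumed interface, not from the notes).
    Returns the iterate and the history of sqrt(r^T B^{-1} r).
    """
    x = list(x0)
    r = [bi - ai for bi, ai in zip(b, matvec(A, x))]
    y = apply_binv(r)
    rho = dot(r, y)                  # rho = r^T B^{-1} r = ||r~||_2^2
    rho0 = rho
    p = list(y)
    history = [math.sqrt(rho)]
    for _ in range(maxit):
        if math.sqrt(rho) <= tol * math.sqrt(rho0):
            break                    # transformed residual is small enough
        q = matvec(A, p)
        alpha = rho / dot(p, q)
        x = [xi + alpha * pi for xi, pi in zip(x, p)]
        r = [ri - alpha * qi for ri, qi in zip(r, q)]
        y = apply_binv(r)
        rho_old, rho = rho, dot(r, y)
        history.append(math.sqrt(rho))
        beta = rho / rho_old
        p = [yi + beta * pi for yi, pi in zip(y, p)]
    return x, history

# Hypothetical test problem: s.p.d. tridiagonal matrix, Jacobi preconditioner B = diag(A).
n = 8
A = [[(2.0 + i) if i == j else (-1.0 if abs(i - j) == 1 else 0.0)
      for j in range(n)] for i in range(n)]
b = [1.0] * n
jacobi = lambda r: [ri / A[i][i] for i, ri in enumerate(r)]

x, history = pcg(A, b, jacobi, [0.0] * n, 1e-10, 50)
true_res = math.sqrt(sum((bi - ai) ** 2 for bi, ai in zip(b, matvec(A, x))))
print(len(history) - 1, "steps, final ||b - Ax||_2 =", true_res)
```

The stopping test costs nothing extra because `rho` is needed for the step size anyway, which is the point of monitoring $r^T B^{-1} r$ instead of recomputing $\|b - Ax\|_2$ in every step.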

[...] replaced with $\kappa(A)$!

Iterative solver for $Ax = b$ with symmetric system matrix $A$:

MATLAB-functions:
• [x,flg,res,it,resv] = minres(A,b,tol,maxit,B,[],x0);
• [...] = minres(Afun,b,tol,maxit,Binvfun,[],x0);

Computational costs: 1 $A\times$vector, 1 $B^{-1}\times$vector per step, a few dot products & SAXPYs
Memory requirement: a few vectors $\in \mathbb{R}^n$

Extension to general regular $A \in \mathbb{R}^{n,n}$:

Idea: Solve the overdetermined linear system of equations

$$x^{(l)} \in x^{(0)} + \mathcal{K}_l(A, r_0): \quad A x^{(l)} = b$$

in the least squares sense, → Chapter 6:

$$x^{(l)} = \operatorname{argmin}\{\|Ay - b\|_2 : y \in x^{(0)} + \mathcal{K}_l(A, r_0)\} .$$

➤ GMRES method for general matrices $A \in \mathbb{R}^{n,n}$

MATLAB-function:
• [x,flag,relr,it,rv] = gmres(A,b,rs,tol,maxit,B,[],x0);
• [...] = gmres(Afun,b,rs,tol,maxit,Binvfun,[],x0);

Computational costs: 1 $A\times$vector, 1 $B^{-1}\times$vector per step, $O(l)$ dot products & SAXPYs in the $l$-th step
Memory requirements: $O(l)$ vectors $\in \mathbb{K}^n$ in the $l$-th step

Remark 4.4.1 (Restarted GMRES).

After many steps of GMRES we face considerable computational costs and memory requirements for every further step. Thus, the iteration may be restarted with the current iterate $x^{(l)}$ as initial guess → the rs-parameter triggers a restart after every rs steps (Danger: failure to converge). △

4.4.2 Iterations with short recursions

Iterative methods for general regular system matrix $A$:

Idea: Given $x^{(0)} \in \mathbb{R}^n$ determine a (better) approximation $x^{(l)}$ through the Petrov-Galerkin condition

$$x^{(l)} \in x^{(0)} + \mathcal{K}_l(A, r_0): \quad p^H (b - A x^{(l)}) = 0 \quad \forall p \in W_l ,$$

with a suitable test space $W_l$, $\dim W_l = l$, e.g. $W_l := \mathcal{K}_l(A^H, r_0)$ (→ biconjugate gradients, BiCG).

Zoo of methods with short recursions (i.e. constant effort per step):

MATLAB-function:
• [x,flag,r,it,rv] = bicgstab(A,b,tol,maxit,B,[],x0)
• [...] = bicgstab(Afun,b,tol,maxit,Binvfun,[],x0);

Computational costs: 2 $A\times$vector, 2 $B^{-1}\times$vector, 4 dot products, 6 SAXPYs per step
Memory requirements: 8 vectors $\in \mathbb{R}^n$

MATLAB-function:
• [x,flag,r,it,rv] = qmr(A,b,tol,maxit,B,[],x0)
• [...] = qmr(Afun,b,tol,maxit,Binvfun,[],x0);

Computational costs: 2 $A\times$vector, 2 $B^{-1}\times$vector, 2 dot products, 12 SAXPYs per step
Memory requirements: 10 vectors $\in \mathbb{R}^n$

Little (useful) convergence theory is available; stagnation & "breakdowns" commonly occur.

Example 4.4.2 (Failure of Krylov iterative solvers).

$$A = \begin{pmatrix} 0 & 1 & 0 & \cdots & \cdots & 0 \\ 0 & 0 & 1 & 0 & & \vdots \\ \vdots & & \ddots & \ddots & \ddots & \vdots \\ \vdots & & & \ddots & \ddots & 0 \\ 0 & & & & 0 & 1 \\ 1 & 0 & \cdots & \cdots & \cdots & 0 \end{pmatrix}, \qquad b = \begin{pmatrix} 0 \\ \vdots \\ 0 \\ 1 \end{pmatrix} \quad\Rightarrow\quad x = e_1 .$$
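To see why the matrix of Example 4.4.2 defeats minimal residual Krylov methods, one can compute the smallest residual norm attainable over $\mathcal{K}_l(A, b)$ for $l = 1, \dots, n$ with $x^{(0)} = 0$. The Python sketch below does this by orthogonalizing the vectors $A^l b$ and projecting $b$ onto their span; it is an illustration under these assumptions, not an implementation of MATLAB's `gmres`, and the helper names are hypothetical.

```python
import math

def cyclic_shift(n):
    """Matrix of Example 4.4.2: ones on the superdiagonal and in the lower-left corner."""
    A = [[0.0] * n for _ in range(n)]
    for i in range(n - 1):
        A[i][i + 1] = 1.0
    A[n - 1][0] = 1.0
    return A

def matvec(A, v):
    return [sum(a * x for a, x in zip(row, v)) for row in A]

def dot(u, v):
    return sum(a * b for a, b in zip(u, v))

def min_residual_norms(A, b, steps):
    """min{||b - A y||_2 : y in K_l(A, b)} for l = 1..steps, with x0 = 0."""
    norms = []
    q_basis = []      # orthonormal basis of A*K_l(A, b)
    res = list(b)     # b minus its projection onto A*K_l(A, b)
    v = list(b)       # current Krylov vector A^(l-1) b (not normalized)
    for _ in range(steps):
        w = matvec(A, v)          # A^l b, the new direction in A*K_l
        v = list(w)               # keep it as the next Krylov vector
        for q in q_basis:         # modified Gram-Schmidt
            c = dot(q, w)
            w = [wi - c * qi for wi, qi in zip(w, q)]
        nw = math.sqrt(dot(w, w))
        if nw > 1e-14:
            q = [wi / nw for wi in w]
            q_basis.append(q)
            c = dot(q, res)
            res = [ri - c * qi for ri, qi in zip(res, q)]
        norms.append(math.sqrt(dot(res, res)))
    return norms

n = 6
b = [0.0] * (n - 1) + [1.0]
norms = min_residual_norms(cyclic_shift(n), b, n)
print(norms)
```

For this matrix the Krylov vectors are the unit vectors $e_n, e_{n-1}, \dots$, so $b$ stays orthogonal to $A\,\mathcal{K}_l(A, b)$ until $l = n$: the residual norm remains 1 for $n-1$ steps and drops to 0 only in step $n$. With a restart length rs $< n$ every cycle would start from the same residual again, which illustrates the danger mentioned in Remark 4.4.1.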
