Numerical Methods Contents - SAM


Fig. 150: Solutions $(y_k)_k$ for different values of rtol (rtol = 0.4, 0.2, 0.1, 0.05, 0.025, 0.0125, 0.00625). Panel title: "Solving $d_t y = a\cos(y)^2$ with $a = 40$ by simple adaptive timestepping". Axes: $t \in [0, 2]$ (horizontal), $y$ (vertical).

Fig. 151: Error vs. computational effort. Panel title: "Error vs. no. of timesteps for $d_t y = a\cos(y)^2$ with $a = 40$". Axes: no. $N$ of timesteps (horizontal, logarithmic), $\max_k |y(t_k) - y_k|$ (vertical, logarithmic). Curves: uniform timestep, adaptive timestep.

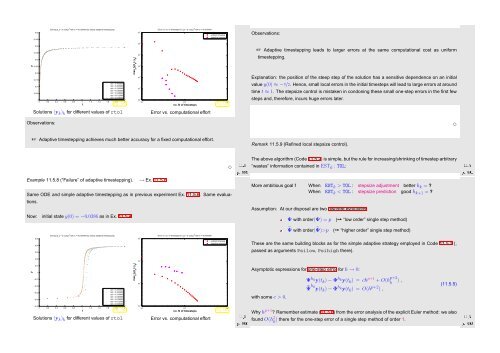

Observations:

☞ Adaptive timestepping achieves much better accuracy for a fixed computational effort.

✸

Example 11.5.8 (“Failure” of adaptive timestepping). → Ex. 11.5.6

Same ODE and same simple adaptive timestepping as in the previous experiment, Ex. 11.5.6. The same evaluations are performed.

Now: initial state $y(0) = -0.0386$ as in Ex. 11.5.4.

Fig. 152: Solutions $(y_k)_k$ for different values of rtol (rtol = 0.4, 0.2, 0.1, 0.05, 0.025, 0.0125, 0.00625). Panel title: "Solving $d_t y = a\cos(y)^2$ with $a = 40$ by simple adaptive timestepping". Axes: $t \in [0, 2]$ (horizontal), $y \in [-0.05, 0.05]$ (vertical).

Fig. 153: Error vs. computational effort. Panel title: "Error vs. no. of timesteps for $d_t y = a\cos(y)^2$ with $a = 40$". Axes: no. $N$ of timesteps (horizontal, logarithmic), $\max_k |y(t_k) - y_k|$ (vertical, logarithmic). Curves: uniform timestep, adaptive timestep.

Observations:

☞ Adaptive timestepping leads to larger errors at the same computational cost as uniform timestepping.

Explanation: the position of the steep step of the solution depends sensitively on the initial value $y(0) \approx -\pi/2$. Hence, small local errors in the initial timesteps lead to large errors at around time $t \approx 1$. The stepsize control mistakenly condones these small one-step errors in the first few steps and, therefore, incurs huge errors later.

✸

Remark 11.5.9 (Refined local stepsize control).

The above algorithm (Code 11.5.2) is simple, but the rule for increasing/shrinking the timestep is arbitrary and “wastes” the information contained in the ratio $\mathrm{EST}_k : \mathrm{TOL}$.

More ambitious goal!

When $\mathrm{EST}_k > \mathrm{TOL}$: stepsize adjustment, better $h_k = ?$

When $\mathrm{EST}_k < \mathrm{TOL}$: stepsize prediction, good $h_{k+1} = ?$

Assumption: At our disposal are two discrete evolutions:

$\Psi$ with $\operatorname{order}(\Psi) = p$ (➙ “low order” single step method)

$\widetilde{\Psi}$ with $\operatorname{order}(\widetilde{\Psi}) > p$ (➙ “higher order” single step method)

These are the same building blocks as for the simple adaptive strategy employed in Code 11.5.2 (passed as arguments Psilow, Psihigh there).
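For orientation, here is a minimal Python sketch of a simple adaptive strategy built from two discrete evolutions passed as arguments. It is not a transcription of Code 11.5.2: the growth factor 1.1, the shrink factor 0.5, the mixed tolerance `max(rtol*|y|, atol)`, the choice of explicit Euler for `psi_low` and the explicit trapezoidal rule for `psi_high`, and the initial value `y0 = 0` are all assumptions made for illustration. The right-hand side is taken literally from the panel titles, $d_t y = a\cos(y)^2$ with $a = 40$. For this ODE $\tan y(t) = \tan y(0) + a t$, which supplies the reference solution used to measure the error.

```python
import math


def psi_low(f, h, y):
    """Explicit Euler step: a discrete evolution of order 1 (assumed choice)."""
    return y + h * f(y)


def psi_high(f, h, y):
    """Explicit trapezoidal (Heun) step: a discrete evolution of order 2 (assumed choice)."""
    k1 = f(y)
    k2 = f(y + h * k1)
    return y + 0.5 * h * (k1 + k2)


def simple_adaptive(psilow, psihigh, f, T, y0, h0, rtol, atol, hmin):
    """Integrate the scalar ODE y' = f(y) on [0, T] with an accept/reject stepsize rule."""
    t, y, h = 0.0, y0, h0
    ts, ys = [t], [y]
    rejected = 0
    while t < T and h >= hmin:
        h = min(h, T - t)                # do not step beyond the final time
        y_high = psihigh(f, h, y)
        y_low = psilow(f, h, y)
        est = abs(y_high - y_low)        # EST_k: estimate of the one-step error
        tol = max(rtol * abs(y), atol)   # TOL (assumed mixed relative/absolute form)
        if est < tol:
            t, y = t + h, y_high         # accept: continue with the higher-order value
            ts.append(t)
            ys.append(y)
            h *= 1.1                     # assumed growth factor
        else:
            h *= 0.5                     # reject: retry with half the stepsize (assumed)
            rejected += 1
    return ts, ys, rejected


a = 40.0


def f(y):
    return a * math.cos(y) ** 2


y0, T = 0.0, 2.0                         # hypothetical initial value and final time
ts, ys, rejected = simple_adaptive(psi_low, psi_high, f, T, y0,
                                   h0=T / 100, rtol=1e-4, atol=1e-8, hmin=1e-12)

# Reference solution from tan(y(t)) = tan(y0) + a*t, valid for |y0| < pi/2.
err = max(abs(math.atan(math.tan(y0) + a * t) - y) for t, y in zip(ts, ys))
print("accepted steps:", len(ts) - 1, "rejected:", rejected, "max error:", err)
```

The rule only uses the yes/no outcome of the comparison of `est` with `tol`; how far the estimate is from the tolerance does not influence the next stepsize.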

Asymptotic expressions for the one-step error for $h_k \to 0$:

$$
\begin{aligned}
\Psi^{h_k} y(t_k) - \Phi^{h_k} y(t_k) &= c\,h_k^{p+1} + O(h_k^{p+2}) , \\
\widetilde{\Psi}^{h_k} y(t_k) - \Phi^{h_k} y(t_k) &= O(h_k^{p+2}) ,
\end{aligned}
\tag{11.5.5}
$$

with some $c > 0$.

Why $h_k^{p+1}$? Remember estimate (11.3.6) from the error analysis of the explicit Euler method: there we also found $O(h_k^2)$ for the one-step error of a single step method of order 1.
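The exponents in (11.5.5) can be checked numerically. The following Python sketch is an illustration under stated assumptions: it uses the ODE as printed in the panel titles, $d_t y = a\cos(y)^2$ with $a = 40$, whose exact evolution is available in closed form because $\tan y(t)$ grows linearly in $t$. The methods (explicit Euler with $p = 1$, explicit trapezoidal rule as the higher-order method), the state `y = 0.5`, and the stepsize `h = 1e-4` are hypothetical choices. Halving $h$ should reduce the one-step error by a factor of about $2^{p+1}$ for the low-order method and about $2^{p+2}$ for the higher-order one.

```python
import math

A = 40.0  # parameter a as printed in the panel titles


def f(y):
    return A * math.cos(y) ** 2


def exact_flow(h, y):
    """Exact evolution Phi^h: d/dt tan(y) = a, hence tan(y(h)) = tan(y) + a*h (|y| < pi/2)."""
    return math.atan(math.tan(y) + A * h)


def euler(h, y):
    """Explicit Euler step, order p = 1."""
    return y + h * f(y)


def heun(h, y):
    """Explicit trapezoidal step, order 2."""
    k1 = f(y)
    k2 = f(y + h * k1)
    return y + 0.5 * h * (k1 + k2)


def one_step_error(psi, h, y):
    """|Psi^h y - Phi^h y| for a single step started from the exact state y."""
    return abs(psi(h, y) - exact_flow(h, y))


def observed_exponent(psi, h, y):
    """Estimate q in error ~ C*h^q by comparing stepsizes h and h/2."""
    return math.log2(one_step_error(psi, h, y) / one_step_error(psi, h / 2, y))


y, h = 0.5, 1e-4                          # hypothetical state and stepsize
q_euler = observed_exponent(euler, h, y)  # expected close to p + 1 = 2
q_heun = observed_exponent(heun, h, y)    # expected close to p + 2 = 3
print("Euler exponent:", q_euler, "Heun exponent:", q_heun)
```

The stepsize is chosen small enough for the leading term to dominate, yet large enough that rounding errors stay far below the one-step errors being compared.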
